📊 Finding a regression equation with TensorFlow

📌 Finding a regression equation with TensorFlow

1. Draw a random weight and bias

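    import tensorflow as tf

    tf.random.set_seed(2)                     # fix the seed so the initial values are reproducible
    w = tf.Variable(tf.random.normal((1,)))   # weight (slope), trainable
    b = tf.Variable(tf.random.normal((1,)))   # bias (intercept), trainable
    print(w.numpy(), ' ', b.numpy())  # [0.7488816] [0.899998]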

2. Choose an optimizer

    from keras.optimizers import SGD, RMSprop, Adam

    opti = SGD()  # default learning rate is 0.01; RMSprop() or Adam() can be swapped in here

3. Find the best-fit regression line

    @tf.function
    def train_func(x, y):
        with tf.GradientTape() as tape:
            hypo = tf.add(tf.multiply(w, x), b)          # y = w*x + b
            loss = tf.reduce_mean(tf.square(hypo - y))   # mean squared error
        grad = tape.gradient(loss, [w, b])       # gradients are computed automatically
        opti.apply_gradients(zip(grad, [w, b]))  # one gradient descent update of w and b
        return loss
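
To make the training step less of a black box, the sketch below reproduces one update by hand in plain Python. It assumes the same mean squared error loss and a plain SGD update; the function name `mse_sgd_step` and the toy data are hypothetical and not part of the original post. For the loss mean((w*x + b - y)^2), the gradient with respect to w is 2 * mean((w*x + b - y) * x), and with respect to b it is 2 * mean(w*x + b - y). These are the quantities `tape.gradient` returns for this loss.

```python
def mse_sgd_step(w, b, xs, ys, lr=0.01):
    """One gradient descent step on mean squared error for y = w*x + b."""
    n = len(xs)
    # Residuals of the current line: (w*x + b) - y
    errors = [(w * x + b) - y for x, y in zip(xs, ys)]
    loss = sum(e * e for e in errors) / n
    # Analytic gradients of the mean squared error
    grad_w = 2.0 * sum(e * x for e, x in zip(errors, xs)) / n
    grad_b = 2.0 * sum(errors) / n
    # Plain SGD update: step against the gradient
    return w - lr * grad_w, b - lr * grad_b, loss


# Hypothetical toy data lying exactly on y = 2x (not the post's data)
xs = [1.0, 2.0, 3.0]
ys = [2.0, 4.0, 6.0]
w, b = 0.0, 0.0
for _ in range(2000):
    w, b, loss = mse_sgd_step(w, b, xs, ys, lr=0.05)
print(w, b, loss)  # w approaches 2 and b approaches 0
```

The returned loss is the value before the update, which matches `train_func`, where the loss is computed inside the tape and the update is applied afterwards.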

4. Record the loss at each epoch

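    # x and y are the training inputs and targets; they are not defined in this section
    w_vals = []      # weight after each epoch
    cost_vals = []   # loss at each epoch
    epochs = 100

    for i in range(1, epochs + 1):
        loss_val = train_func(x, y)
        cost_vals.append(loss_val.numpy())
        w_vals.append(w.numpy())
        if i % 10 == 0:   # report every 10 epochs
            print(loss_val)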

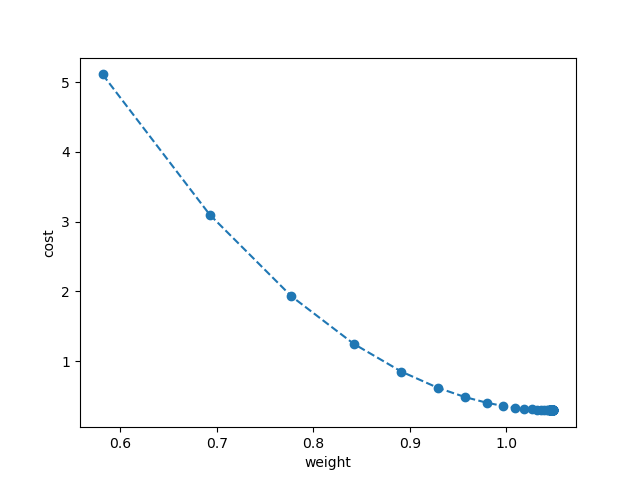

5. Visualize w against cost

    import matplotlib.pyplot as plt

    plt.plot(w_vals, cost_vals, 'o--')
    plt.xlabel('weight')
    plt.ylabel('cost')
    plt.show()

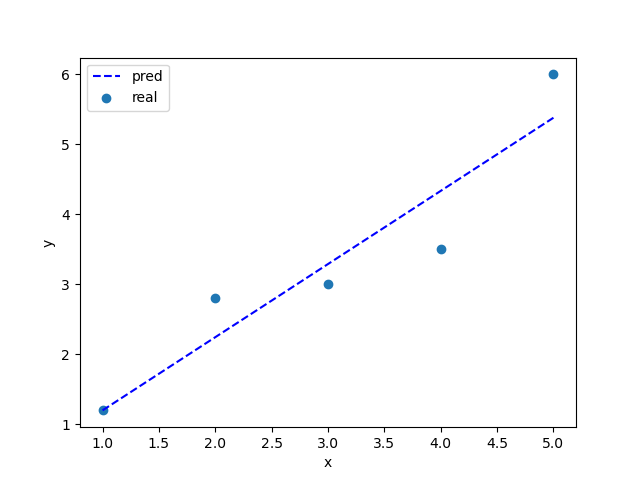

6. Visualize the linear regression line

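    # y_pred is not defined in the original snippet; it is assumed here to be
    # the trained line's predictions, y = w*x + b
    y_pred = tf.add(tf.multiply(w, x), b).numpy()

    plt.scatter(x, y, label='real')
    plt.plot(x, y_pred, '--', c='b', label='pred')
    plt.xlabel('x')
    plt.ylabel('y')
    plt.legend()
    plt.show()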
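
As a sanity check on the trained `w` and `b`, the closed-form least-squares line can be computed directly and compared with the gradient descent result. The sketch below is plain Python; the function name `least_squares_line` and the sample data are hypothetical and not taken from the original post. With only 100 epochs of SGD, the trained values may not have fully converged, so a small gap between the two results is plausible.

```python
def least_squares_line(xs, ys):
    """Closed-form simple linear regression: returns (slope, intercept)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Sum of cross-deviations of x and y, and sum of squared deviations of x
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical data lying exactly on y = 2x + 1 (not the post's data)
xs = [1.0, 2.0, 3.0, 4.0]
ys = [3.0, 5.0, 7.0, 9.0]
slope, intercept = least_squares_line(xs, ys)
print(f"y = {slope:.4f} * x + {intercept:.4f}")  # y = 2.0000 * x + 1.0000
```

Passing the same `x` and `y` used for training (as Python lists of floats) would give the line that `w` and `b` should approach as training continues.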