One of the major trends in NLP research is the use of the attention mechanism.

Attention Is All You Need (The Transformer)

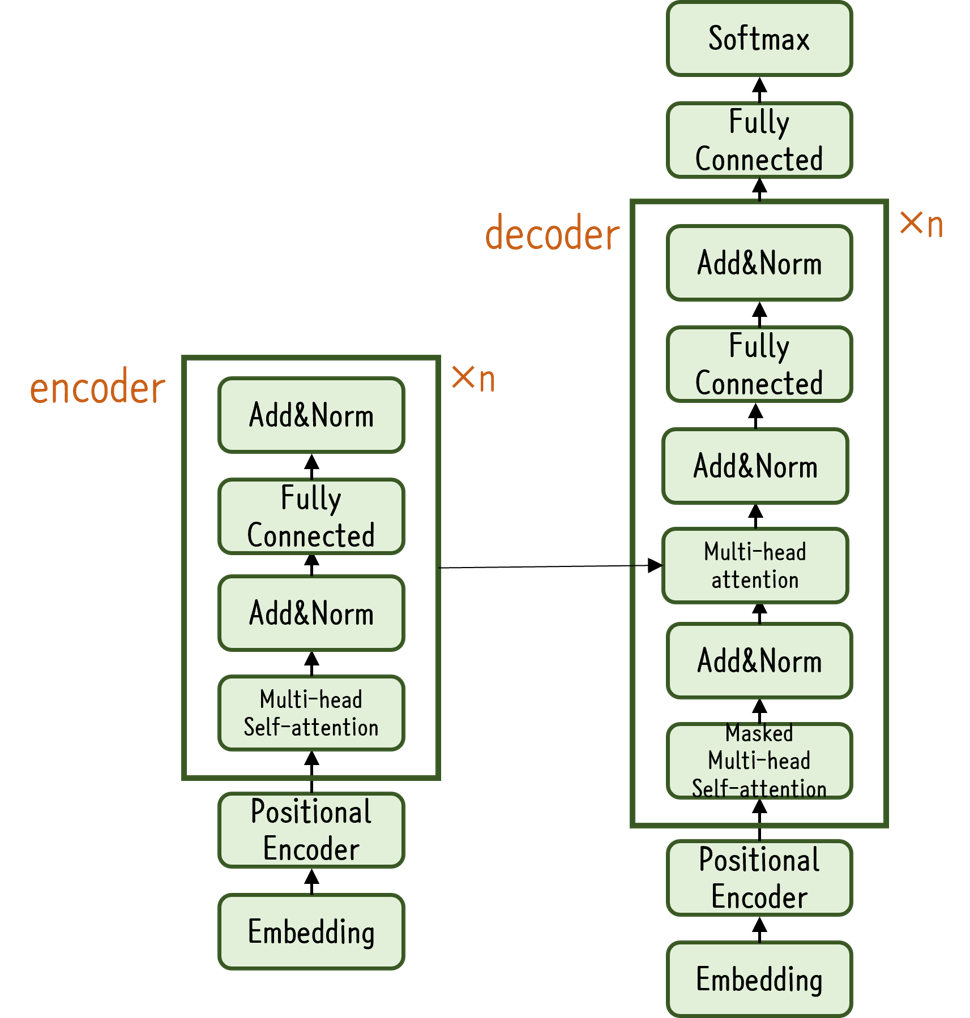

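- Uses an encoder-decoder structure

- The encoder stores the input information in vectors, and the decoder uses that information to generate the output

- Built by chaining several attention modules instead of RNN-family modules

- Trains faster because all tokens are received and processed at once rather than fed in one at a time in order

- The basic module wraps Multi-Head Attention and a Feed Forward layer with shortcut (residual) connections

- The encoder and the decoder each consist of this module stacked several times

- Positional Encoding is added to supply position information; Layer Normalization and a position-wise Feed Forward module (equivalent to a 1x1 convolution) are also used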

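- Scaled Dot-Product Attention

  - Consists of Query (Q), Key (K), and Value (V)

  - For a tokenized sentence, the sum of each token's embedding vector and positional encoding vector is used as the input

  - The input is multiplied by three different weight matrices to produce the Q, K, and V matrices

  - Q, K, and V are therefore different representations of the input

  - First, the matrix product of Q and the transpose of K gives the dot-product similarity between every pair of token vectors

  - Dividing by $\sqrt{d_k}$ (the key dimension) reduces the influence of vector magnitude (scaling)

  - Applying softmax to this matrix turns each token's similarity to the other tokens' K vectors into normalized weights

  - This score matrix is then multiplied by the V matrix

  - The result for each token is a weighted average of the value vectors, weighted by the relative scores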

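- Multi-Head Attention Module

  - Consists of several (h) Scaled Dot-Product Attention modules

  - The h attention heads learn differently from one another, so together they capture complex relationships among the tokens in a sentence

  - Their outputs are concatenated and passed through one more Linear layer

  - Because each position must not see information from later positions, the first layer of each decoder module uses Masked Multi-Head Attention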
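The attention steps listed above can be sketched in a few lines of PyTorch. This is a minimal single-head illustration, not the reference implementation: the function name `scaled_dot_product_attention`, the tensor sizes, and the random weight matrices `w_q`, `w_k`, `w_v` are assumptions made for the example. The optional mask shows how a decoder could block attention to later positions.

```python
import math
import torch

def scaled_dot_product_attention(q, k, v, mask=None):
    """q, k, v: (seq_len, d_k) tensors. mask: (seq_len, seq_len) bool, True = may attend."""
    d_k = q.size(-1)
    # Dot-product similarity between every query and every key, scaled by sqrt(d_k).
    scores = q @ k.transpose(-2, -1) / math.sqrt(d_k)
    if mask is not None:
        # Blocked positions get -inf so that softmax assigns them zero weight.
        scores = scores.masked_fill(~mask, float('-inf'))
    weights = torch.softmax(scores, dim=-1)  # each row sums to 1
    return weights @ v, weights              # weighted average of the value vectors

torch.manual_seed(0)
seq_len, d_model, d_k = 4, 8, 8
x = torch.randn(seq_len, d_model)  # stand-in for token embedding + positional encoding

# Three different (here random, normally learned) weight matrices produce Q, K, V.
w_q, w_k, w_v = (torch.randn(d_model, d_k) for _ in range(3))
q, k, v = x @ w_q, x @ w_k, x @ w_v

out, weights = scaled_dot_product_attention(q, k, v)

# Causal (lower-triangular) mask: position i may only attend to positions <= i.
causal_mask = torch.tril(torch.ones(seq_len, seq_len)).bool()
masked_out, masked_weights = scaled_dot_product_attention(q, k, v, causal_mask)
```

A multi-head module would run h such attentions on separately projected inputs, concatenate the results, and apply one more linear layer.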

Pre-Training Model

- In vision, models already trained on large datasets are released and shared; you rebuild only the final layers and fine-tune on your own data and task

- In NLP, the counterpart has been feature learning such as word embeddings
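As a rough illustration of "rebuild only the final layers", the sketch below freezes a stand-in backbone and trains a new head on top of it. The tiny `backbone` network, the layer sizes, and the choice to freeze the backbone completely are assumptions made for the example; in practice the backbone would be a released pre-trained model, and some or all of its layers may also be updated.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

# Hypothetical stand-in for a network whose weights were already trained elsewhere.
backbone = nn.Sequential(nn.Linear(32, 16), nn.ReLU())
for param in backbone.parameters():
    param.requires_grad = False  # keep the pre-trained weights fixed

head = nn.Linear(16, 3)  # new task-specific final layer (3 classes assumed)
model = nn.Sequential(backbone, head)

# Only parameters that still require gradients are handed to the optimizer.
optimizer = torch.optim.SGD([p for p in model.parameters() if p.requires_grad], lr=0.01)

x = torch.randn(5, 32)          # dummy batch of 5 examples
y = torch.randint(0, 3, (5,))   # dummy labels
loss = nn.functional.cross_entropy(model(x), y)
loss.backward()
optimizer.step()
```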

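BERT

- Training consists of unsupervised pre-training, which learns features from general documents, followed by fine-tuning, which trains the model once more on each task

- Unsupervised pre-training takes only plain sentences as input and has no given answers, so the tasks and their labels must be constructed from the text itself

- MLM (Masked Language Model): hide a few randomly chosen tokens and predict them from the surrounding context

- NSP (Next Sentence Prediction): decide whether a sentence pair is consecutive or was matched at random

- Together these tasks focus learning on relationships between words and on sentence-level understanding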

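- Based on the Transformer

- Because it is a general language model rather than a translation model, it uses only the encoder part of the Transformer

- Learns the various associations among the tokens in a sentence and represents them as vectors in which that information is appropriately mixed

- Token Embedding and Position Embedding are kept

- Since the input is two sentences joined together, a Segment Embedding is added to tell them apart

- [SEP] and [CLS] tokens are added

- [SEP] marks the end of a sentence and separates sentences; [CLS] marks the start of the input

- During pre-training, each token's output is used for the MLM task and the [CLS] token's output for the NSP task

- During fine-tuning as well, token outputs serve tasks that use word-level information, and the [CLS] output serves tasks that use sentence-level information

- Fine-tuning usually adds just one Fully-Connected layer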

- The output of the pre-trained model is a new kind of embedding

- Unlike word2vec, it is a contextual embedding: the embedding vector changes with the context of the sentence

- This resolves the homonym problem
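To make the input layout and the MLM task concrete, here is a small pure-Python sketch. The helper names `build_pair_input` and `mask_tokens`, the toy sentences, and the use of `None` as the "not scored" label are assumptions made for illustration. The sketch always substitutes `[MASK]`, which is a simplification: the original BERT recipe masks about 15% of tokens and sometimes keeps or randomly replaces the chosen token instead.

```python
import random

def build_pair_input(tokens_a, tokens_b):
    """Return tokens and segment ids for the layout [CLS] A [SEP] B [SEP]."""
    tokens = ['[CLS]'] + tokens_a + ['[SEP]'] + tokens_b + ['[SEP]']
    # Segment 0 covers [CLS], sentence A and its [SEP]; segment 1 covers sentence B and its [SEP].
    segment_ids = [0] * (len(tokens_a) + 2) + [1] * (len(tokens_b) + 1)
    return tokens, segment_ids

def mask_tokens(tokens, mask_prob=0.15, rng=random):
    """Hide random non-special tokens; labels hold the original token at masked positions."""
    masked, labels = [], []
    for tok in tokens:
        if tok not in ('[CLS]', '[SEP]') and rng.random() < mask_prob:
            masked.append('[MASK]')
            labels.append(tok)    # the model must recover this token from context
        else:
            masked.append(tok)
            labels.append(None)   # position is not scored by the MLM loss
    return masked, labels

rng = random.Random(0)
tokens, segment_ids = build_pair_input(['the', 'cat', 'sat'], ['it', 'slept'])
# A high mask probability is used here only so that the toy example masks something.
masked, labels = mask_tokens(tokens, mask_prob=0.5, rng=rng)
print(tokens)
print(segment_ids)
print(masked)
```

For NSP, the same `build_pair_input` layout would be used with sentence B either the true next sentence or a randomly drawn one, and the label predicted from the [CLS] output.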

Building a model with a pre-trained BERT model

    !pip install transformers

- Choose the data and task

- Tokenization (+ data cleansing)

    import re

    def PreProcessingText(input_sentence):
        input_sentence = input_sentence.lower()  # lowercase
        input_sentence = re.sub(r'<[^>]*>', repl=' ', string=input_sentence)  # strip HTML tags such as <br />
        input_sentence = re.sub(r'[!"$%&()*+,\-./:;<=>?@\[\]^_`{|}~]', repl=' ', string=input_sentence)  # replace punctuation, keeping apostrophes
        input_sentence = re.sub(r'\s+', repl=' ', string=input_sentence)  # collapse repeated whitespace
        return input_sentence  # always return a string so callers can safely call .split()

    # train_data and test_data are assumed to be datasets loaded earlier
    # whose examples hold a tokenized 'text' field
    for example in train_data.examples:
        vars(example)['text'] = PreProcessingText(' '.join(vars(example)['text'])).split()

    for example in test_data.examples:
        vars(example)['text'] = PreProcessingText(' '.join(vars(example)['text'])).split()

- Choose a pre-trained embedding

- Choose a model (RNN, LSTM, GRU, CNN, BERT, etc.)

- Train and measure performance

    import torch
    import torch.nn as nn
    from transformers import BertModel

    bert = BertModel.from_pretrained('bert-base-uncased')  # pass the name of the BERT model you want to use

    # model_config is assumed to be a dict defined earlier that also holds 'output_dim'
    model_config['emb_dim'] = bert.config.hidden_size  # BERT outputs one hidden_size-dimensional vector per token

    class SentenceClassification(nn.Module):
        def __init__(self, **model_config):
            super().__init__()
            self.bert = bert
            self.fc = nn.Linear(model_config['emb_dim'], model_config['output_dim'])

        def forward(self, x):
            # self.bert(x)[0]: last hidden state of every token, including [CLS]
            # self.bert(x)[1]: pooled output of the [CLS] token
            pooled_cls_output = self.bert(x)[1]
            return self.fc(pooled_cls_output)

When sequences have different lengths

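- Padding: fill the empty positions with `<pad>`

  - Uses a `<pad>` token

  - `<pad>` tokens must not be processed as real data, so padding-specific handling is added

  - If the data fed to the decoder is `<pad>`, it is excluded from the loss (add a mask to the loss layer)

  - If the data fed to the encoder is `<pad>`, the recurrent layer passes the previous time step's state through unchanged

- Packing: store the length of each sequence; in PyTorch the batch must be sorted by decreasing length unless enforce_sorted=False is passed

  - No `<pad>` token is needed

  - More complex to implement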
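The two approaches can be compared on a toy batch. This is a minimal sketch using `torch.nn.utils.rnn`; the index `PAD_IDX = 0`, the example sequences, the random `logits`, and the vocabulary size of 10 are assumptions made for illustration.

```python
import torch
import torch.nn as nn
from torch.nn.utils.rnn import pad_sequence, pack_padded_sequence, pad_packed_sequence

torch.manual_seed(0)
PAD_IDX = 0  # index reserved for <pad> (assumption of this sketch)

# Three index sequences of different lengths, not sorted by length.
sequences = [torch.tensor([4, 5, 6, 7]), torch.tensor([8, 9]), torch.tensor([3, 2, 1])]
lengths = torch.tensor([len(s) for s in sequences])

# Padding: shorter sequences are filled with PAD_IDX up to the longest length.
padded = pad_sequence(sequences, batch_first=True, padding_value=PAD_IDX)  # shape (3, 4)

# Packing: only the real tokens are stored, together with per-step batch sizes.
# enforce_sorted=False lets PyTorch sort the batch internally.
packed = pack_padded_sequence(padded, lengths, batch_first=True, enforce_sorted=False)

# Converting back restores the padded layout in the original batch order.
unpacked, unpacked_lengths = pad_packed_sequence(packed, batch_first=True)

# Masking <pad> in the loss: positions whose target is PAD_IDX are ignored.
logits = torch.randn(3, 4, 10)  # stand-in for decoder outputs over a 10-word vocabulary
loss_fn = nn.CrossEntropyLoss(ignore_index=PAD_IDX)
loss = loss_fn(logits.reshape(-1, 10), padded.reshape(-1))
```

A packed batch can be passed directly to recurrent layers such as `nn.GRU` or `nn.LSTM`, which then skip the padded positions.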

    # all four functions live in torch.nn.utils.rnn
    pack_sequence()         # packing
    pad_sequence()          # padding
    pad_packed_sequence()   # convert a packed batch back to a padded one
    pack_padded_sequence()  # convert a padded batch to a packed one

Implementing a Seq2Seq model

    import random

    import torch
    import torch.nn as nn
    import torch.optim as optim

    torch.manual_seed(0)
    device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')

    # each entry holds an English sentence and its Korean translation, separated by a tab
    raw = ['I feel hungry.\t나는 배가 고프다.',
           'Pytorch is very easy.\t파이토치는 매우 쉽다.',
           'Pytorch is a framework for deep learning.\t파이토치는 딥러닝을 위한 프레임워크이다.',
           'Pytorch is very clear to use.\t파이토치는 사용하기 매우 직관적이다.']

    SOS_token = 0  # start-of-sentence index
    EOS_token = 1  # end-of-sentence index

    def preprocess(corpus, source_max_length, target_max_length):
        # filter_pair and Vocab are helpers that are not defined in this listing
        # split each line into a [source, target] pair on the tab character
        pairs = [line.strip().lower().split('\t') for line in corpus]
        # drop pairs whose sentences exceed the length limits
        pairs = [pair for pair in pairs if filter_pair(pair, source_max_length, target_max_length)]

        source_vocab = Vocab()
        target_vocab = Vocab()
        for pair in pairs:
            source_vocab.add_vocab(pair[0])
            target_vocab.add_vocab(pair[1])

        return pairs, source_vocab, target_vocab
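The preprocessing function calls `filter_pair` and `Vocab`, neither of which is defined in this section. The sketch below is one minimal way to write them that is consistent with how they are used here (`add_vocab`, `vocab2index`, `n_vocab`); the bodies are assumptions, and the definitions in the referenced lab may differ.

```python
SOS_token = 0
EOS_token = 1

def filter_pair(pair, source_max_length, target_max_length):
    """Keep a pair only if both sentences are shorter than their word-count limits."""
    return (len(pair[0].split(' ')) < source_max_length
            and len(pair[1].split(' ')) < target_max_length)

class Vocab:
    """Word <-> index mapping that starts with the <SOS> and <EOS> tokens."""

    def __init__(self):
        self.vocab2index = {'<SOS>': SOS_token, '<EOS>': EOS_token}
        self.index2vocab = {SOS_token: '<SOS>', EOS_token: '<EOS>'}
        self.n_vocab = len(self.vocab2index)

    def add_vocab(self, sentence):
        # Assign the next free index to every word not seen before.
        for word in sentence.split(' '):
            if word not in self.vocab2index:
                self.vocab2index[word] = self.n_vocab
                self.index2vocab[self.n_vocab] = word
                self.n_vocab += 1

vocab = Vocab()
vocab.add_vocab('pytorch is very easy.')
vocab.add_vocab('pytorch is very clear to use.')
print(vocab.n_vocab)
```

Splitting on single spaces keeps punctuation attached to words (for example `easy.`), which matches how the sentences are split when they are converted to index tensors.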

    class Encoder(nn.Module):
        def __init__(self, input_size, hidden_size):
            super().__init__()
            self.hidden_size = hidden_size
            # input_size: number of words in the source vocabulary
            # hidden_size: dimension of each word vector
            self.embedding = nn.Embedding(input_size, hidden_size)
            self.gru = nn.GRU(hidden_size, hidden_size)

        def forward(self, x, hidden):
            x = self.embedding(x).view(1, 1, -1)  # (seq_len=1, batch=1, hidden_size)
            x, hidden = self.gru(x, hidden)
            return x, hidden

    class Decoder(nn.Module):
        def __init__(self, hidden_size, output_size):
            super().__init__()
            self.hidden_size = hidden_size
            self.embedding = nn.Embedding(output_size, hidden_size)
            self.gru = nn.GRU(hidden_size, hidden_size)
            self.out = nn.Linear(hidden_size, output_size)
            self.softmax = nn.LogSoftmax(dim=1)

        def forward(self, x, hidden):
            x = self.embedding(x).view(1, 1, -1)
            x, hidden = self.gru(x, hidden)
            x = self.softmax(self.out(x[0]))  # log-probabilities over the target vocabulary
            return x, hidden

    def tensorize(vocab, sentence):
        # convert a sentence into a column tensor of word indices that ends with <EOS>
        indexes = [vocab.vocab2index[word] for word in sentence.split(' ')]
        indexes.append(vocab.vocab2index['<EOS>'])
        return torch.tensor(indexes, dtype=torch.long, device=device).view(-1, 1)

    def train(pairs, source_vocab, target_vocab, encoder, decoder, n_iter, print_every=1000, learning_rate=0.01):
        loss_total = 0

        encoder_optimizer = optim.SGD(encoder.parameters(), lr=learning_rate)
        decoder_optimizer = optim.SGD(decoder.parameters(), lr=learning_rate)

        # sample n_iter training pairs and convert them to index tensors
        training_batch = [random.choice(pairs) for _ in range(n_iter)]
        training_source = [tensorize(source_vocab, pair[0]) for pair in training_batch]
        training_target = [tensorize(target_vocab, pair[1]) for pair in training_batch]

        criterion = nn.NLLLoss()

        for i in range(1, n_iter + 1):
            source_tensor = training_source[i - 1]
            target_tensor = training_target[i - 1]

            encoder_hidden = torch.zeros([1, 1, encoder.hidden_size]).to(device)

            encoder_optimizer.zero_grad()
            decoder_optimizer.zero_grad()

            source_length = source_tensor.size(0)
            target_length = target_tensor.size(0)

            loss = 0

            # feed the source sentence to the encoder one token at a time
            for enc_input in range(source_length):
                _, encoder_hidden = encoder(source_tensor[enc_input], encoder_hidden)

            decoder_input = torch.tensor([[SOS_token]], dtype=torch.long, device=device)
            decoder_hidden = encoder_hidden  # the encoder's final hidden state initializes the decoder

            for di in range(target_length):
                decoder_output, decoder_hidden = decoder(decoder_input, decoder_hidden)
                loss += criterion(decoder_output, target_tensor[di])
                decoder_input = target_tensor[di]  # teacher forcing: feed the ground-truth token next

            loss.backward()

            encoder_optimizer.step()
            decoder_optimizer.step()

            loss_iter = loss.item() / target_length
            loss_total += loss_iter

            if i % print_every == 0:
                loss_avg = loss_total / print_every
                loss_total = 0
                print('[{} - {}%] loss = {:05.4f}'.format(i, i / n_iter * 100, loss_avg))

    source_max_length = 10
    target_max_length = 12

    load_pairs, load_source_vocab, load_target_vocab = preprocess(raw, source_max_length, target_max_length)
    print(random.choice(load_pairs))

    enc_hidden_size = 16
    dec_hidden_size = enc_hidden_size  # the decoder reuses the encoder's hidden state, so the sizes must match

    enc = Encoder(load_source_vocab.n_vocab, enc_hidden_size).to(device)
    dec = Decoder(dec_hidden_size, load_target_vocab.n_vocab).to(device)

    train(load_pairs, load_source_vocab, load_target_vocab, enc, dec, 5000, print_every=1000)

References

파이썬 딥러닝 파이토치 (Python Deep Learning PyTorch), 이경택, 방성수, 안상준

모두를 위한 딥러닝 시즌 2 (Deep Learning for Everyone, Season 2), Lab 11-5, 11-6