- Adding labels to nodes

## View the master node's labels

kevin@k8s-master:~$ kubectl get no k8s-master --show-labels

...node-role.kubernetes.io/control-plane=,...

## Add a label to node1 and node2

kevin@k8s-master:~$ kubectl label nodes k8s-node1 node-role.kubernetes.io/worker=worker

kevin@k8s-master:~$ kubectl label nodes k8s-node2 node-role.kubernetes.io/worker=worker

## Verify

kevin@k8s-master:~$ kubectl get no

NAME STATUS ROLES AGE VERSION

k8s-master Ready control-plane 5d18h v1.24.5

k8s-node1 Ready worker 5d18h v1.24.5

k8s-node2 Ready worker 5d18h v1.24.5

## Remove the label (a trailing - after the key removes it)

kevin@k8s-master:~$ kubectl label nodes k8s-node1 node-role.kubernetes.io/worker-

kevin@k8s-master:~$ kubectl label nodes k8s-node2 node-role.kubernetes.io/worker-

- Deleting Pods

## Delete by object name

kevin@k8s-master:~$ kubectl delete po mynode-pod1

## Force delete: a warning is printed, but the Pod is still deleted

kevin@k8s-master:~$ kubectl delete po mynode-pod2 --grace-period=0 --force

warning: Immediate deletion does not wait for confirmation that the running resource has been terminated. The resource may continue to run on the cluster indefinitely.

pod "mynode-pod2" force deleted

## Delete using the manifest file

kevin@k8s-master:~/LABs/mynode$ kubectl delete -f mynode3.yaml

## Change terminationGracePeriodSeconds

kevin@k8s-master:~/LABs/mynode$ vi mynode.yaml

apiVersion: v1
kind: Pod
metadata:
  name: mynode-pod
spec:
  containers:
  - image: dbgurum/mynode:1.0
    name: mynode-container
    ports:
    - containerPort: 8000
  terminationGracePeriodSeconds: 10

## apply

kevin@k8s-master:~/LABs/mynode$ kubectl apply -f mynode.yaml

## Verify

kevin@k8s-master:~/LABs/mynode$ kubectl get po -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

mynode-pod 1/1 Running 0 31s 10.111.156.96 k8s-node1 <none> <none>

## Check terminationGracePeriodSeconds

kevin@k8s-master:~/LABs/mynode$ kubectl get po mynode-pod -o yaml | grep -i termination

terminationGracePeriodSeconds: 10

Kubernetes API resources

Pod count

Kubernetes' documented limits for large clusters: up to 110 Pods per node, up to 5,000 nodes, up to 150,000 Pods in total, and up to 300,000 containers in total.

kevin@k8s-master:~$ kubectl describe no k8s-node1 | grep -i pod

pods: 110
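The 110 shown above is the kubelet's default per-node Pod limit, exposed as maxPods in the kubelet configuration. A minimal sketch of raising it follows. It assumes the kubelet reads a KubeletConfiguration file (kubeadm writes one to /var/lib/kubelet/config.yaml). The value 200 is only an illustration.

```yaml
# Sketch: kubelet configuration fragment (assumed file: /var/lib/kubelet/config.yaml)
apiVersion: kubelet.config.k8s.io/v1beta1
kind: KubeletConfiguration
# Per-node Pod limit; the default is 110. 200 is a hypothetical value.
maxPods: 200
```

The kubelet has to be restarted to pick up the change. The node's Pod CIDR also needs enough free addresses for the extra Pods.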

taint

NoSchedule: newly created Pods are not scheduled onto the master, because the control-plane node carries this taint.

kevin@k8s-master:~$ kubectl describe no | grep -i taint

Taints: node-role.kubernetes.io/control-plane:NoSchedule

Taints: <none>

Taints: <none>

## Assign the Pod to the master with nodeSelector

kevin@k8s-master:~/LABs/mynode$ vi mynode.yaml

apiVersion: v1
kind: Pod
metadata:
  name: taint-pod
spec:
  nodeSelector:
    kubernetes.io/hostname: k8s-master
  containers:
  - image: dbgurum/mynode:1.0
    name: mynode-container
    ports:
    - containerPort: 8000

## apply

kevin@k8s-master:~/LABs/mynode$ kubectl apply -f mynode.yaml

## Check the status: the Pod is stuck in Pending because it does not tolerate the master's NoSchedule taint

kevin@k8s-master:~/LABs/mynode$ kubectl get po -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

taint-pod 0/1 Pending 0 7s <none> <none> <none> <none>

## The container never started, so there are no logs

kevin@k8s-master:~/LABs/mynode$ kubectl logs taint-pod
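For comparison, here is a sketch of how taint-pod could be scheduled onto the master. It adds a toleration that matches the node-role.kubernetes.io/control-plane:NoSchedule taint shown above. This variant was not part of the lab run, and the Pod name taint-tolerant-pod is hypothetical.

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: taint-tolerant-pod   # hypothetical name
spec:
  nodeSelector:
    kubernetes.io/hostname: k8s-master
  # Tolerate the control-plane taint so the scheduler may place the Pod on the master
  tolerations:
  - key: node-role.kubernetes.io/control-plane
    operator: Exists
    effect: NoSchedule
  containers:
  - image: dbgurum/mynode:1.0
    name: mynode-container
    ports:
    - containerPort: 8000
```

A toleration only permits scheduling on a tainted node. The nodeSelector is what actually pins the Pod to k8s-master.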

## Delete

kevin@k8s-master:~/LABs/mynode$ kubectl delete -f mynode.yaml

Node maintenance

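- taint

: Applies NoSchedule to prevent Pods from being assigned to the node.

- drain

: Evicts all Pods from a specific node (the node is cordoned first).

What happens if you drain the master node?

-> Everything except the five core components (kubelet, scheduler, controller-manager, etcd, api-server) is drained. In a kubeadm cluster the last four run as static Pods, which drain does not evict, and the kubelet runs on the host rather than as a Pod.

- cordon (<=> uncordon)

: Prevents new Pods from being assigned to a specific node but keeps the existing Pods.

- uncordon

: Reverses drain or cordon, making the node schedulable again.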
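kubectl cordon works by setting spec.unschedulable on the Node object, and uncordon sets it back to false. Below is a sketch of the relevant part of the Node, for example as seen in kubectl get node k8s-node1 -o yaml after cordoning. Other fields are omitted.

```yaml
apiVersion: v1
kind: Node
metadata:
  name: k8s-node1
spec:
  # Set by "kubectl cordon k8s-node1"; the scheduler places no new Pods here
  unschedulable: true
```

Existing Pods keep running on a cordoned node. kubectl get nodes shows it as Ready,SchedulingDisabled. drain goes one step further and also evicts the Pods.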

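Service object

: Comparable to giving the -p option to docker run.

: Forwards traffic through kube-proxy.

kompose

Converts the YAML written for docker-compose into Kubernetes manifests (workload and Service YAML files).

The Docker counterpart of a Service is docker-proxy, which starts only when -p is given. To trace docker-proxy, we used netstat -nlp | grep <host port> and then looked up the resulting PID with ps -ef.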

kevin@k8s-master:~$ sudo docker run -d --name=myweb -p 10001:80 nginx:1.23.1-alpine

kevin@k8s-master:~$ sudo netstat -nlp | grep 10001

tcp 0 0 0.0.0.0:10001 0.0.0.0:* LISTEN 387901/docker-proxy

tcp6 0 0 :::10001 :::* LISTEN 387908/docker-proxy

kevin@k8s-master:~$ ps -ef | grep 387901

root 387901 972 0 10:22 ? 00:00:00 /usr/bin/docker-proxy -proto tcp -host-ip 0.0.0.0 -host-port 10001 -container-ip 172.17.0.2 -container-port 80

kevin 388265 349358 0 10:22 pts/1 00:00:00 grep --color=auto 387901

Why the Service object is needed

Why do we need ClusterIP, NodePort, and LoadBalancer?

: To reach a Pod from outside, clients use the Service IP:port.

A Service holds the Pod IP:port pairs (endpoints) used to reach its Pods -> routing.

When it is connected to multiple Pods (through labels), it load-balances across them by itself.

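How it works

- setup

A Service was connected to three Pods.

Creating a Service -> it is automatically registered in CoreDNS (kube-dns) -> a service name:IP record is added.

When external traffic comes in through the Service (service IP:port), it is translated through the endpoints to a Pod IP:port (NAT, NAPT, iptables). kube-proxy performs this translation. It gets the Service and endpoint information by watching the API server. kube-dns is what clients use to resolve the service name.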

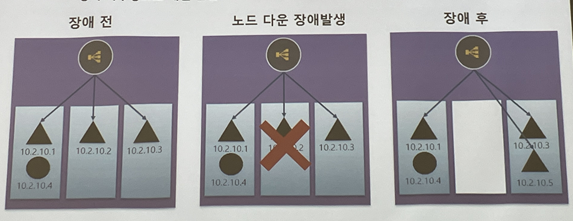

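Services in a distributed environment (cluster)

By the nature of Kubernetes, when a node or Pod fails, the Pod is started again on another node with a different IP.

Static routing cannot handle this.

The triangle Pod was run as a replica, and after the failure its IP changes. Because a Service is attached, the change does not matter: the Service finds its Pods by label, not by IP.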

Service types (as handled by kube-proxy)

ClusterIP

: Provides an IP that can be used only inside the cluster.

Pod IPs are assigned from the range set with --pod-network-cidr (10.96.0.0/12 in this cluster).

The Service (ClusterIP) range is set separately with --service-cidr (e.g. 20.96.0.0/13). kubeadm's default is 10.96.0.0/12.
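When the cluster is created with kubeadm, both ranges can also be given in a configuration file instead of as flags. The sketch below assumes kubeadm's v1beta3 config API (used around Kubernetes 1.24) and reuses the ranges mentioned above. The file name kubeadm-config.yaml is hypothetical.

```yaml
# Sketch: kubeadm-config.yaml (hypothetical file name)
# Usage: kubeadm init --config kubeadm-config.yaml
apiVersion: kubeadm.k8s.io/v1beta3
kind: ClusterConfiguration
networking:
  podSubnet: "10.96.0.0/12"      # same role as --pod-network-cidr (Pod IPs)
  serviceSubnet: "20.96.0.0/13"  # same role as --service-cidr (ClusterIPs)
```

The Pod and Service ranges should not overlap. Any unused private range works as well as the example values.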

frontend---backend---db (web-was-db) -> 3-tier model

frontend (NodePort, LoadBalancer)

backend (ClusterIP)

db (reachable from branch offices over VPN, or kept on-premises in the company server room)

## Write the YAML file

kevin@k8s-master:~$ vi clustip-test.yaml

apiVersion: v1
kind: Pod
metadata:
  name: clusterip-pod
  labels:
    app: backend
spec:
  nodeSelector:
    kubernetes.io/hostname: k8s-node1
  containers:
  - name: container
    image: dbgurum/k8s-lab:v1.0
    ports:
    - containerPort: 8080
---
apiVersion: v1
kind: Service
metadata:
  name: clusterip-svc
spec:
  selector:
    app: backend
  ports:
  - port: 9000
    targetPort: 8080
  type: ClusterIP

## apply

kevin@k8s-master:~$ kubectl apply -f clustip-test.yaml

## Check the Pod

kevin@k8s-master:~$ kubectl get pod/clusterip-pod -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

clusterip-pod 1/1 Running 0 13s 10.111.156.97 k8s-node1 <none> <none>

## Check the Service

kevin@k8s-master:~$ kubectl get service/clusterip-svc -o yaml | grep IP

clusterIP: 10.111.174.57

clusterIPs:

- IPv4

type: ClusterIP

## Check the Service

kevin@k8s-master:~$ kubectl get service

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

clusterip-svc ClusterIP 10.111.174.57 <none> 9000/TCP 62s

## Check the endpoints

kevin@k8s-master:~$ kubectl get endpoints clusterip-svc

NAME ENDPOINTS AGE

clusterip-svc 10.111.156.97:8080 77s

## The ClusterIP can be queried from every node in the cluster.

kevin@k8s-node1:~$ sudo curl 10.111.174.57:9000/hostname

Hostname : clusterip-pod

## It cannot be reached from outside the cluster.

- Creating a Service -> automatically registered in CoreDNS (kube-dns) -> service name:IP

## apply

kevin@k8s-master:~$ kubectl apply -f https://k8s.io/examples/admin/dns/dnsutils.yaml

## Verify

kevin@k8s-master:~$ kubectl get po -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

clusterip-pod 1/1 Running 0 23m 10.111.156.97 k8s-node1 <none> <none>

## nslookup

kevin@k8s-master:~$ kubectl exec -it dnsutils -- nslookup kubernetes.default

Server: 10.96.0.10

Address: 10.96.0.10#53

Name: kubernetes.default.svc.cluster.local

Address: 10.96.0.1

kevin@k8s-master:~$ kubectl get svc -o wide

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE SELECTOR

kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 2d22h <none>

## Check the nameserver

kevin@k8s-master:~$ kubectl exec -it dnsutils -- cat /etc/resolv.conf

search default.svc.cluster.local svc.cluster.local cluster.local

nameserver 10.96.0.10

options ndots:5

kevin@k8s-master:~$ kubectl get po -n kube-system -l k8s-app=kube-dns

NAME READY STATUS RESTARTS AGE

coredns-6d4b75cb6d-bmsx5 1/1 Running 3 (26h ago) 5d21h

coredns-6d4b75cb6d-jk88g 1/1 Running 3 (26h ago) 5d21h

- DNS test

: Pod IPs and Service IPs (ClusterIP) are registered in DNS; check them with nslookup.

## Write the YAML file

kevin@k8s-master:~/LABs$ vi dns.yaml

apiVersion: v1
kind: Pod
metadata:
  name: dns-pod
  labels:
    dns: verify
spec:
  containers:
  - image: redis
    name: redis-dns
    ports:
    - containerPort: 6379
---
apiVersion: v1
kind: Service
metadata:
  name: dns-svc
spec:
  selector:
    dns: verify
  ports:
  - port: 6379
    targetPort: 6379

## apply

kevin@k8s-master:~/LABs$ kubectl apply -f dns.yaml

## Verify

kevin@k8s-master:~/LABs$ kubectl get po,svc -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

pod/dns-pod 1/1 Running 0 51s 10.109.131.39 k8s-node2 <none> <none>

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE SELECTOR

service/dns-svc ClusterIP 10.110.239.221 <none> 6379/TCP 51s dns=verify

kevin@k8s-master:~/LABs$ kubectl run dnspod-verify --image=busybox --restart=Never --rm -it -- nslookup 10.109.131.39

Server: 10.96.0.10

Address: 10.96.0.10:53

39.131.109.10.in-addr.arpa name = 10-109-131-39.dns-svc.default.svc.cluster.local

pod "dnspod-verify" deleted

kevin@k8s-master:~/LABs$ kubectl run dnspod-verify --image=busybox --restart=Never --rm -it -- nslookup 10.110.239.221

Server: 10.96.0.10

Address: 10.96.0.10:53

221.239.110.10.in-addr.arpa name = dns-svc.default.svc.cluster.local

pod "dnspod-verify" deleted

## Both the Pod IP and the ClusterIP can be resolved with nslookup.

redis

: in-memory DB

Mostly used as a cache (like memcached).

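NodePort

: External. For connections from outside the cluster (Node IP:port).

Opens the same port on every node, picked at random from the 30000-32767 range unless one is specified.

kube-proxy keeps its own forwarding rules. External traffic that arrives at the node port goes to the NodePort Service and is then forwarded to a target Pod.

The port can be assigned automatically (at random) or specified manually.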

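The numbers show the order in which traffic passes through the ports (1 -> 2 -> 3):

ports:
- port: 8080        # (2) Service port on the ClusterIP
  targetPort: 80    # (3) container port on the Pod
  nodePort: 31111   # (1) port opened on every node; external traffic enters here

## Write the YAML file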

kevin@k8s-master:~/LABs$ vi mynode-pod.yaml

apiVersion: v1
kind: Pod
metadata:
  name: nodeport-pod
  labels:
    app: hi-mynode
spec:
  nodeSelector:
    kubernetes.io/hostname: k8s-node1
  containers:
  - name: mynode-container
    image: oeckikekk/mynode:1.0
    ports:
    - containerPort: 8000
---
apiVersion: v1
kind: Service
metadata:
  name: nodeport-svc
spec:
  selector:
    app: hi-mynode
  type: NodePort
  ports:
  - port: 8899
    targetPort: 8000
    nodePort: 30000

## apply

kevin@k8s-master:~/LABs$ kubectl apply -f mynode-pod.yaml

## Verify

kevin@k8s-master:~/LABs$ kubectl get po -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

nodeport-pod 1/1 Running 0 65s 10.111.156.100 k8s-node1 <none> <none>

## Check the Service

kevin@k8s-master:~/LABs$ kubectl get service

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

nodeport-svc NodePort 10.104.8.56 <none> 8899:30000/TCP 57s

## Check the endpoints

kevin@k8s-master:~/LABs$ kubectl get endpoints nodeport-svc

NAME ENDPOINTS AGE

nodeport-svc 10.111.156.100:8000 77s

## The service is reachable on port 30000 of every node IP.

kevin@k8s-master:~/LABs$ curl 192.168.56.100:30000/hostname

Welcome to kubernetes~! soyeon

kevin@k8s-master:~/LABs$ curl 192.168.56.101:30000/hostname

Welcome to kubernetes~! soyeon

kevin@k8s-master:~/LABs$ curl 192.168.56.102:30000/hostname

Welcome to kubernetes~! soyeon

## http://192.168.56.101:30000/ is also reachable from a browser on Windows.

## Write the YAML file

kevin@k8s-master:~/LABs$ vi lb-pod.yaml

apiVersion: v1
kind: Pod
metadata:
  name: lb-pod1
  labels:
    lb: pod
spec:
  nodeSelector:
    kubernetes.io/hostname: k8s-node1
  containers:
  - image: dbgurum/k8s-lab:v1.0
    name: pod1
    ports:
    - containerPort: 8080
---
apiVersion: v1
kind: Pod
metadata:
  name: lb-pod2
  labels:
    lb: pod
spec:
  nodeSelector:
    kubernetes.io/hostname: k8s-node2
  containers:
  - image: dbgurum/k8s-lab:v1.0
    name: pod2
    ports:
    - containerPort: 8080

## apply

kevin@k8s-master:~/LABs$ kubectl apply -f lb-pod.yaml

## Verify

kevin@k8s-master:~/LABs$ kubectl get po -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

lb-pod1 1/1 Running 0 8s 10.111.156.101 k8s-node1 <none> <none>

lb-pod2 1/1 Running 0 8s 10.109.131.40 k8s-node2 <none> <none>

## Write the YAML file

kevin@k8s-master:~/LABs$ vi lb-svc.yaml

apiVersion: v1
kind: Service
metadata:
  name: nodep-svc
spec:
  selector:
    lb: pod
  ports:
  - port: 9000
    targetPort: 8080
    nodePort: 32568
  type: NodePort

## apply

kevin@k8s-master:~/LABs$ kubectl apply -f lb-svc.yaml

## Verify

kevin@k8s-master:~/LABs$ kubectl get po,svc -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

pod/lb-pod1 1/1 Running 0 3m33s 10.111.156.101 k8s-node1 <none> <none>

pod/lb-pod2 1/1 Running 0 3m33s 10.109.131.40 k8s-node2 <none> <none>

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE SELECTOR

service/nodeport-svc NodePort 10.104.8.56 <none> 8899:30000/TCP 27m app=hi-mynode

## Responses alternate between lb-pod1 and lb-pod2

kevin@k8s-master:~/LABs$ curl 192.168.56.101:32568/hostname

Hostname : lb-pod2

kevin@k8s-master:~/LABs$ curl 192.168.56.101:32568/hostname

Hostname : lb-pod1

## Open the live definition of the running Service

## Cluster is the policy that spreads traffic across the Pods on all nodes

kevin@k8s-master:~/LABs$ kubectl edit svc nodep-svc

externalTrafficPolicy: Cluster

## Change it to:

externalTrafficPolicy: Local

internalTrafficPolicy: Local

## Now every request goes to the same Pod (the one on the node that received it)

kevin@k8s-master:~/LABs$ curl 192.168.56.101:32568/hostname

Hostname : lb-pod1

kevin@k8s-master:~/LABs$ curl 192.168.56.101:32568/hostname

Hostname : lb-pod1
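The same change can be kept in the manifest instead of being made with kubectl edit. Below is a sketch of lb-svc.yaml with both policies set to Local, the values used above.

```yaml
apiVersion: v1
kind: Service
metadata:
  name: nodep-svc
spec:
  selector:
    lb: pod
  ports:
  - port: 9000
    targetPort: 8080
    nodePort: 32568
  type: NodePort
  # Local: a node forwards external traffic only to Pods on that same node
  externalTrafficPolicy: Local
  # Local: in-cluster traffic is also kept on the originating node
  internalTrafficPolicy: Local
```

With Local, a node that has no matching Pod drops the traffic instead of forwarding it to another node. In exchange, the client's source IP is preserved.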

## Switching back to Cluster makes pod1 and pod2 alternate again.

Key attributes in application design

NodePort: externalTrafficPolicy: Cluster | Local

ClusterIP: sessionAffinity is a comparable attribute -> setting it to ClientIP pins a client's session to the same Pod.
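Below is a sketch of session affinity on the earlier ClusterIP Service (clusterip-svc). sessionAffinity accepts None (the default) or ClientIP. timeoutSeconds controls how long the pinning lasts (default 10800, i.e. 3 hours). The 600-second value is only an example.

```yaml
apiVersion: v1
kind: Service
metadata:
  name: clusterip-svc
spec:
  selector:
    app: backend
  ports:
  - port: 9000
    targetPort: 8080
  type: ClusterIP
  # Requests from the same client IP keep going to the same Pod
  sessionAffinity: ClientIP
  sessionAffinityConfig:
    clientIP:
      timeoutSeconds: 600   # example value; default is 10800
```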

LoadBalancer

: A technique that provides a public IP as the EXTERNAL-IP.

Mostly used in cloud environments.

In VM environments it can be implemented with MetalLB.
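Below is a sketch of a MetalLB layer-2 setup for this VM cluster. It assumes MetalLB v0.13 or later, which is configured through CRDs, is already installed in the metallb-system namespace. The pool name, advertisement name, and address range are hypothetical; the range should be unused addresses on the 192.168.56.0/24 host-only network.

```yaml
apiVersion: metallb.io/v1beta1
kind: IPAddressPool
metadata:
  name: lab-pool            # hypothetical name
  namespace: metallb-system
spec:
  addresses:
  - 192.168.56.200-192.168.56.250   # hypothetical range of free IPs
---
apiVersion: metallb.io/v1beta1
kind: L2Advertisement
metadata:
  name: lab-l2              # hypothetical name
  namespace: metallb-system
spec:
  ipAddressPools:
  - lab-pool
```

With this in place, a Service of type LoadBalancer should get an EXTERNAL-IP from the pool instead of staying at <pending>.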

## Write the YAML file

C:\k8s>notepad lb-test.yaml

apiVersion: v1
kind: Pod
metadata:
  name: lb-pod
  labels:
    lb: pod
spec:
  containers:
  - image: dbgurum/k8s-lab:v1.0
    name: pod1
    ports:
    - containerPort: 8080
---
apiVersion: v1
kind: Service
metadata:
  name: nodep-svc
spec:
  selector:
    lb: pod
  ports:
  - port: 9000
    targetPort: 8080
  type: LoadBalancer

## apply

C:\k8s>kubectl apply -f lb-test.yaml

## A port in the 30000 range appears because a LoadBalancer Service has NodePort built in

## It relies on NodePort's load-balancing function

C:\k8s>kubectl get po,svc -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

pod/lb-pod 1/1 Running 0 52s 10.12.2.8 gke-k8s-cluster-k8s-nodepool-bc541242-jgo3 <none> <none>

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE SELECTOR

service/kubernetes ClusterIP 10.16.0.1 <none> 443/TCP 5d19h <none>

service/nodep-svc LoadBalancer 10.16.5.74 34.64.230.225 9000:30979/TCP 51s lb=pod

## Check the connection

C:\k8s>curl 34.64.230.225:9000/hostname

Hostname : lb-pod

## Check the API version

kevin@k8s-master:~/LABs$ kubectl api-resources | grep -i deploy

deployments deploy apps/v1 true Deployment

## Write the YAML file

C:\k8s>notepad lb-deploy-test.yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  name: lb-deploy
  labels:
    app: lb-app
spec:
  replicas: 3
  selector:
    matchLabels:
      app: lb-app
  template:
    metadata:
      labels:
        app: lb-app
    spec:
      containers:
      - image: dbgurum/k8s-lab:v1.0
        name: lb-container
        ports:
        - containerPort: 8080

## apply

C:\k8s>kubectl apply -f lb-deploy-test.yaml

## Verify

C:\k8s>kubectl get deploy,po -o wide

NAME READY UP-TO-DATE AVAILABLE AGE CONTAINERS IMAGES SELECTOR

deployment.apps/lb-deploy 3/3 3 3 19s lb-container dbgurum/k8s-lab:v1.0 app=lb-app

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

pod/lb-deploy-55d7988bf7-894mc 1/1 Running 0 19s 10.12.2.9 gke-k8s-cluster-k8s-nodepool-bc541242-jgo3 <none> <none>

pod/lb-deploy-55d7988bf7-dx26x 1/1 Running 0 19s 10.12.0.14 gke-k8s-cluster-k8s-nodepool-bc541242-zuc3 <none> <none>

pod/lb-deploy-55d7988bf7-x72qd 1/1 Running 0 19s 10.12.3.8 gke-k8s-cluster-k8s-nodepool-bc541242-o1ji <none> <none>

## Write the YAML file

C:\k8s>notepad lb-deploy-svc.yaml

apiVersion: v1
kind: Service
metadata:
  name: lb-svc
spec:
  selector:
    app: lb-app
  ports:
  - port: 9000
    targetPort: 8080
  type: LoadBalancer

## apply

C:\k8s>kubectl apply -f lb-deploy-svc.yaml

## Verify

C:\k8s>kubectl get deploy,po,svc -o wide

NAME READY UP-TO-DATE AVAILABLE AGE CONTAINERS IMAGES SELECTOR

deployment.apps/lb-deploy 3/3 3 3 3m30s lb-container dbgurum/k8s-lab:v1.0 app=lb-app

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

pod/lb-deploy-55d7988bf7-894mc 1/1 Running 0 3m30s 10.12.2.9 gke-k8s-cluster-k8s-nodepool-bc541242-jgo3 <none> <none>

pod/lb-deploy-55d7988bf7-dx26x 1/1 Running 0 3m30s 10.12.0.14 gke-k8s-cluster-k8s-nodepool-bc541242-zuc3 <none> <none>

pod/lb-deploy-55d7988bf7-x72qd 1/1 Running 0 3m30s 10.12.3.8 gke-k8s-cluster-k8s-nodepool-bc541242-o1ji <none> <none>

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE SELECTOR

service/kubernetes ClusterIP 10.16.0.1 <none> 443/TCP 5d21h <none>

service/lb-svc LoadBalancer 10.16.11.38 34.64.230.225 9000:31430/TCP 53s app=lb-app

## Requests are being load-balanced across the three Pods.

C:\k8s>curl 34.64.230.225:9000/hostname

Hostname : lb-deploy-55d7988bf7-dx26x

C:\k8s>curl 34.64.230.225:9000/hostname

Hostname : lb-deploy-55d7988bf7-894mc

C:\k8s>curl 34.64.230.225:9000/hostname

Hostname : lb-deploy-55d7988bf7-x72qd

## Delete

C:\k8s>kubectl delete po lb-deploy-55d7988bf7-894mc lb-deploy-55d7988bf7-dx26x

## Verify: the Deployment has already started replacement Pods

C:\Users\user\AppData\Local\Google\Cloud SDK>kubectl get deploy,po,svc -o wide

NAME READY UP-TO-DATE AVAILABLE AGE CONTAINERS IMAGES SELECTOR

deployment.apps/lb-deploy 3/3 3 3 6m57s lb-container dbgurum/k8s-lab:v1.0 app=lb-app

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

pod/lb-deploy-55d7988bf7-7blh4 1/1 Running 0 2s 10.12.0.15 gke-k8s-cluster-k8s-nodepool-bc541242-zuc3 <none> <none>

pod/lb-deploy-55d7988bf7-7g4jf 1/1 Running 0 2s 10.12.2.10 gke-k8s-cluster-k8s-nodepool-bc541242-jgo3 <none> <none>

pod/lb-deploy-55d7988bf7-894mc 1/1 Terminating 0 6m57s 10.12.2.9 gke-k8s-cluster-k8s-nodepool-bc541242-jgo3 <none> <none>

pod/lb-deploy-55d7988bf7-dx26x 1/1 Terminating 0 6m57s 10.12.0.14 gke-k8s-cluster-k8s-nodepool-bc541242-zuc3 <none> <none>

pod/lb-deploy-55d7988bf7-x72qd 1/1 Running 0 6m57s 10.12.3.8 gke-k8s-cluster-k8s-nodepool-bc541242-o1ji <none> <none>

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE SELECTOR

service/kubernetes ClusterIP 10.16.0.1 <none> 443/TCP 5d21h <none>

service/lb-svc LoadBalancer 10.16.11.38 34.64.230.225 9000:31430/TCP 4m20s app=lb-app

## Delete

C:\k8s>kubectl delete -f lb-deploy-svc.yaml

C:\k8s>kubectl delete -f lb-depl

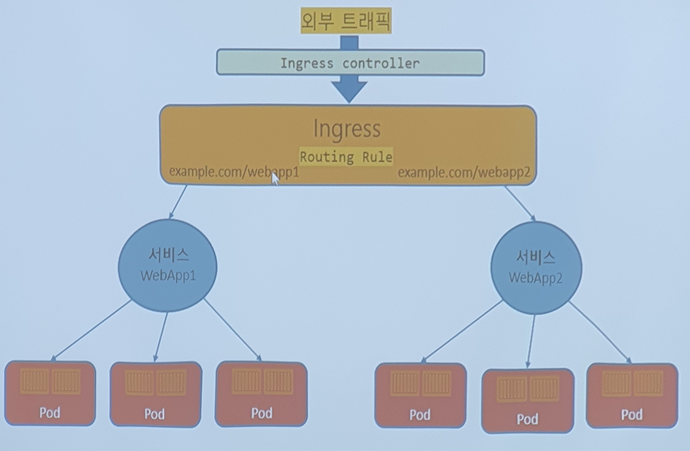

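C:\k8s>kubectl delete -f lb-deploy-test.yaml

Ingress

: L7 routing for http (80) and https (443 -> TLS: create a certificate with openssl (*.crt) -> store it in a Secret that the Ingress references)

The most important part of an Ingress is its rules (a smart router): you define rules, and the rules decide where each request is sent.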

www.example.com/ -> Service1 -> Pods -> container -> application

www.example.com/customer -> Service2 -> Pods -> container -> application

www.example.com/payment -> Service3 -> Pods -> container -> application

A rule is defined for each URL and each rule has its own Service, so every request is sent to the right place depending on which page it asks for.

=> An Ingress acts as a router.
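Below is a sketch of the www.example.com rules above as a single Ingress, with TLS terminated using a certificate stored in a Secret. The Service names service1 to service3, their port 80, the Ingress name, and the Secret name example-tls are hypothetical. The Secret could be created from the openssl output with kubectl create secret tls example-tls --cert=tls.crt --key=tls.key. ingressClassName is the networking.k8s.io/v1 field that replaces the kubernetes.io/ingress.class annotation.

```yaml
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: example-ingress       # hypothetical name
spec:
  ingressClassName: nginx
  tls:
  - hosts:
    - www.example.com
    secretName: example-tls   # hypothetical TLS Secret (tls.crt / tls.key)
  rules:
  - host: www.example.com
    http:
      paths:
      # The longest matching path wins, so /customer and /payment take priority over /
      - path: /
        pathType: Prefix
        backend:
          service:
            name: service1
            port:
              number: 80
      - path: /customer
        pathType: Prefix
        backend:
          service:
            name: service2
            port:
              number: 80
      - path: /payment
        pathType: Prefix
        backend:
          service:
            name: service3
            port:
              number: 80
```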

[ingress controller] -> nginx -> a service built as a NodePort-type proxy

Order

1. ingress controller

Of the available controllers, nginx is the most widely used.

On VMs, use the bare-metal version.

It distributes traffic to the Services through NodePort.

2. ingress object

3. service

4. pod

## ingress controller

kevin@k8s-master:~/LABs$ kubectl apply -f https://raw.githubusercontent.com/kubernetes/ingress-nginx/controller-v1.4.0/deploy/static/provider/baremetal/deploy.yaml

## Check the ingress installation

kevin@k8s-master:~/LABs$ pall

NAMESPACE NAME READY STATUS RESTARTS AGE

ingress-nginx ingress-nginx-admission-create-2lplb 0/1 Completed 0 5m42s

ingress-nginx ingress-nginx-admission-patch-4q6h4 0/1 Completed 1 5m42s

ingress-nginx ingress-nginx-controller-6d68c766c6-w2sjk 0/1 Running 0 5m42s

...

## Verify

kevin@k8s-master:~/LABs$ kubectl get all -n ingress-nginx

NAME READY STATUS RESTARTS AGE

pod/ingress-nginx-admission-create-2lplb 0/1 Completed 0 6m

pod/ingress-nginx-admission-patch-4q6h4 0/1 Completed 1 6m

pod/ingress-nginx-controller-6d68c766c6-w2sjk 1/1 Running 0 6m

## Ports 80 and 443 are visible

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

service/ingress-nginx-controller NodePort 10.103.87.127 <none> 80:30697/TCP,443:31624/TCP 6m

service/ingress-nginx-controller-admission ClusterIP 10.99.208.188 <none> 443/TCP 6m

NAME READY UP-TO-DATE AVAILABLE AGE

deployment.apps/ingress-nginx-controller 1/1 1 1 6m

NAME DESIRED CURRENT READY AGE

replicaset.apps/ingress-nginx-controller-6d68c766c6 1 1 1 6m

NAME COMPLETIONS DURATION AGE

job.batch/ingress-nginx-admission-create 1/1 5m10s 6m

job.batch/ingress-nginx-admission-patch 1/1 5m12s 6m

## pod, service

kevin@k8s-master:~/LABs$ cd ingress/

## Write the pod and service YAML file

kevin@k8s-master:~/LABs/ingress$ vi ing-pod-svc.yaml

apiVersion: v1
kind: Pod
metadata:
  name: h1-pod
  labels:
    run: hi-app
spec:
  containers:
  - image: dbgurum/ingress:hi
    name: hi-container
    args:
    - "-text=Hi! kubernetes."
---
apiVersion: v1
kind: Service
metadata:
  name: hi-svc
spec:
  selector:
    run: hi-app
  ports:
  - port: 5678

## apply

kevin@k8s-master:~/LABs/ingress$ kubectl apply -f ing-pod-svc.yaml

## Check the API version

kevin@k8s-master:~/LABs/ingress$ kubectl api-resources | grep -i ingress

ingressclasses networking.k8s.io/v1 false IngressClass

ingresses ing networking.k8s.io/v1 true Ingress

## Write the ingress YAML file

kevin@k8s-master:~/LABs/ingress$ vi ingress.yaml

apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: http-hi
  annotations:
    kubernetes.io/ingress.class: "nginx"
    ingress.kubernetes.io/rewrite-target: /
spec:
  rules:
  - http:
      paths:
      - path: /hi
        pathType: Prefix
        backend:
          service:
            name: hi-svc
            port:
              number: 5678

## apply

kevin@k8s-master:~/LABs/ingress$ kubectl apply -f ingress.yaml

## ingress describe

kevin@k8s-master:~/LABs/ingress$ kubectl describe ingress http-hi

Name: http-hi

Labels: <none>

Namespace: default

Address:

Ingress Class: <none>

Default backend: <default>

Rules:

Host Path Backends

---- ---- --------

*

/hi hi-svc:5678 (10.109.131.47:5678)

Annotations: ingress.kubernetes.io/rewrite-target: /

kubernetes.io/ingress.class: nginx

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Normal Sync 3s nginx-ingress-controller Scheduled for sync

## Verify

kevin@k8s-master:~/LABs/ingress$ kubectl get ingress,po,svc -o wide | grep -i hi

ingress.networking.k8s.io/http-hi <none> * 80 39s

service/hi-svc ClusterIP 10.100.34.127 <none> 5678/TCP 26m run=hi-app

service/nodeport-svc NodePort 10.104.8.56 <none> 8899:30000/TCP 3h18m app=hi-mynode

## Check the connection

kevin@k8s-master:~/LABs/ingress$ curl 192.168.56.102:30697/hi

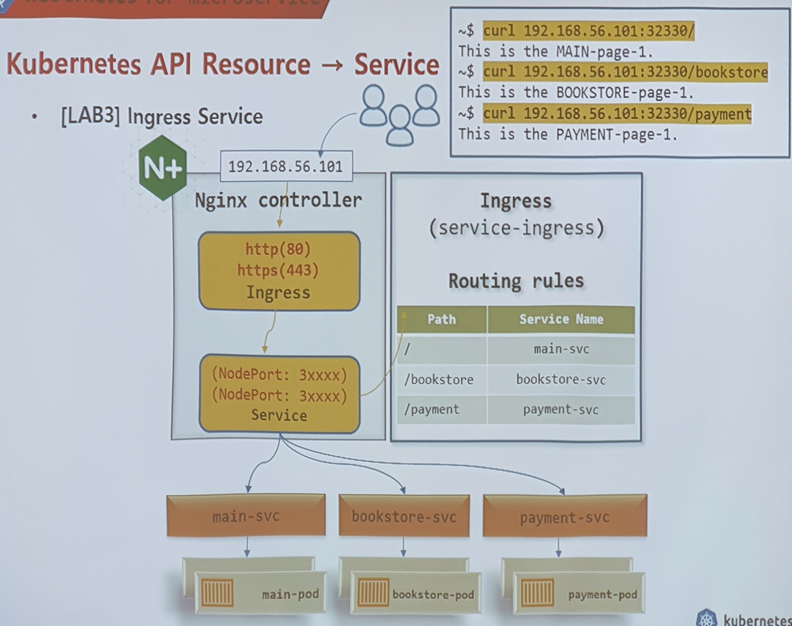

Hi! kubernetes.

- ingress Service

## Write the YAML file

kevin@k8s-master:~/LABs/ingress$ vi ingress-test.yaml

apiVersion: v1
kind: Pod
metadata:
  name: main-pod-1
  labels:
    run: main-app
spec:
  containers:
  - image: dbgurum/ingress:hi
    name: main-container-1
    args:
    - "-text=This is the MAIN-page-1."
---
apiVersion: v1
kind: Pod
metadata:
  name: main-pod-2
  labels:
    run: main-app
spec:
  containers:
  - image: dbgurum/ingress:hi
    name: main-container-2
    args:
    - "-text=This is the MAIN-page-2."
---
apiVersion: v1
kind: Service
metadata:
  name: main-svc
spec:
  selector:
    run: main-app
  ports:
  - port: 5678
---
apiVersion: v1
kind: Pod
metadata:
  name: bookstore-pod-1
  labels:
    run: bookstore-app
spec:
  containers:
  - image: dbgurum/ingress:hi
    name: bookstore-container-1
    args:
    - "-text=This is the BOOKSTORE-page-1."
---
apiVersion: v1
kind: Pod
metadata:
  name: bookstore-pod-2
  labels:
    run: bookstore-app
spec:
  containers:
  - image: dbgurum/ingress:hi
    name: bookstore-container-2
    args:
    - "-text=This is the BOOKSTORE-page-2."
---
apiVersion: v1
kind: Service
metadata:
  name: bookstore-svc
spec:
  selector:
    run: bookstore-app
  ports:
  - port: 5678
---
apiVersion: v1
kind: Pod
metadata:
  name: payment-pod-1
  labels:
    run: payment-app
spec:
  containers:
  - image: dbgurum/ingress:hi
    name: payment-container-1
    args:
    - "-text=This is the PAYMENT-page-1."
---
apiVersion: v1
kind: Pod
metadata:
  name: payment-pod-2
  labels:
    run: payment-app
spec:
  containers:
  - image: dbgurum/ingress:hi
    name: payment-container-2
    args:
    - "-text=This is the PAYMENT-page-2."
---
apiVersion: v1
kind: Service
metadata:
  name: payment-svc
spec:
  selector:
    run: payment-app
  ports:
  - port: 5678
---
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: http-main
  annotations:
    kubernetes.io/ingress.class: "nginx"
    ingress.kubernetes.io/rewrite-target: /
spec:
  rules:
  - http:
      paths:
      - path: /
        pathType: Prefix
        backend:
          service:
            name: main-svc
            port:
              number: 5678
      - path: /bookstore
        pathType: Prefix
        backend:
          service:
            name: bookstore-svc
            port:
              number: 5678
      - path: /payment
        pathType: Prefix
        backend:
          service:
            name: payment-svc
            port:
              number: 5678

## apply

kevin@k8s-master:~/LABs/ingress$ kubectl apply -f ingress-test.yaml

## Check the connections

kevin@k8s-master:~/LABs/ingress$ curl 192.168.56.102:30697/

kevin@k8s-master:~/LABs/ingress$ curl 192.168.56.102:30697/bookstore

kevin@k8s-master:~/LABs/ingress$ curl 192.168.56.102:30697/payment

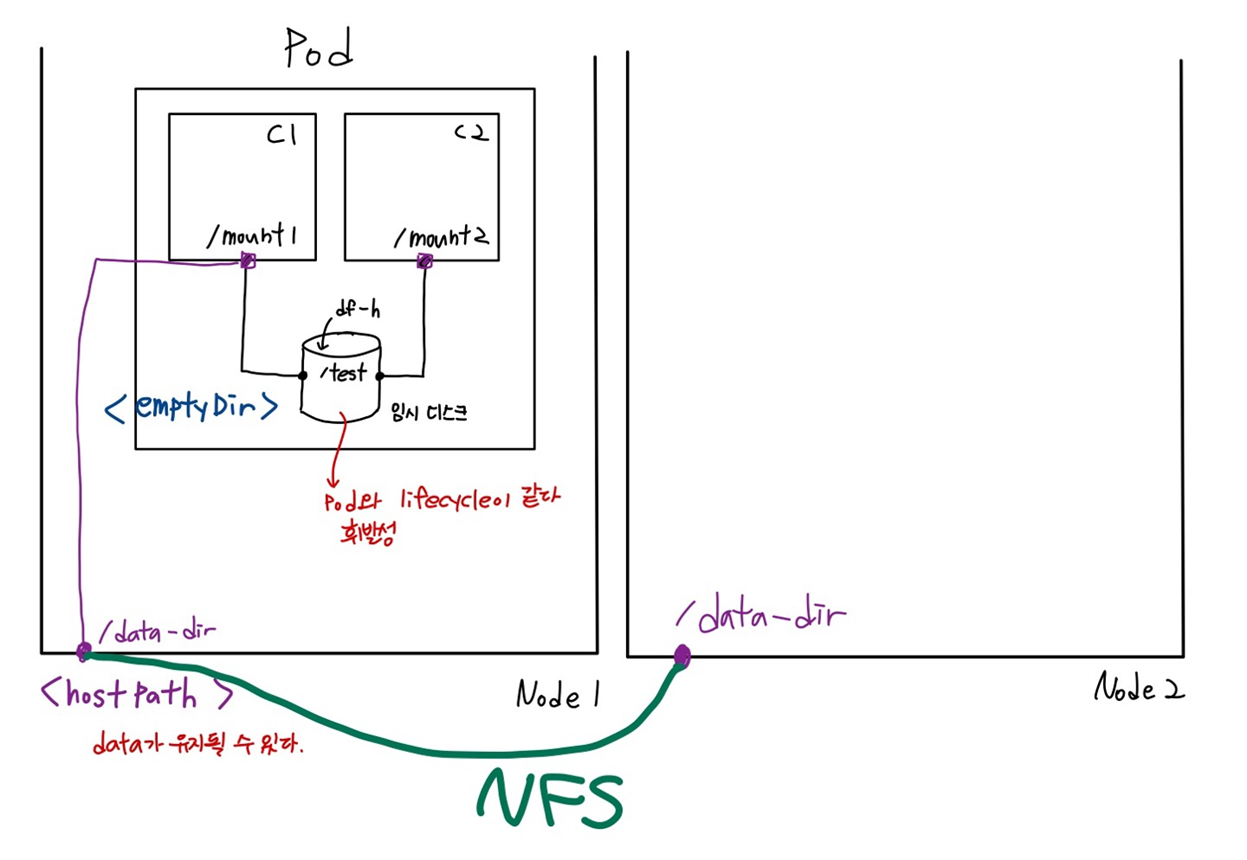

## This can also be checked from Windows.

Volume and Data

PV and PVC are used to remove the dependency on the OS (the node's own storage).
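Below is a sketch of how a PV and a PVC separate the storage details from the Pod. The PV describes the storage; a hostPath is used here, which is only suitable for a lab. The PVC is what a Pod asks for. The names, the 1Gi size, the storageClassName manual, and the path /data_dir/pv are assumptions for illustration.

```yaml
apiVersion: v1
kind: PersistentVolume
metadata:
  name: lab-pv                 # hypothetical name
spec:
  capacity:
    storage: 1Gi
  accessModes:
  - ReadWriteOnce
  storageClassName: manual     # assumed class name, used only to match the PVC
  hostPath:
    path: /data_dir/pv         # hypothetical node directory
---
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: lab-pvc                # hypothetical name
spec:
  accessModes:
  - ReadWriteOnce
  storageClassName: manual
  resources:
    requests:
      storage: 1Gi
```

A Pod then refers only to the claim (volumes: - name: ... persistentVolumeClaim: claimName: lab-pvc), without knowing where the data actually lives.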

storage

- cloud, github (the gitRepo volume type, deprecated)

Volume

- sharing, persistence

emptyDir

## Create the directory and move into it

kevin@k8s-master:~/LABs$ mkdir emptydir && cd $_

## Create the YAML file

kevin@k8s-master:~/LABs/emptydir$ vi empty-1.yaml

apiVersion: v1
kind: Pod
metadata:
  name: vol-pod1
spec:
  containers:
  - image: dbgurum/k8s-lab:initial
    name: c1
    volumeMounts:
    - name: empty-vol
      mountPath: /mount1
  - image: dbgurum/k8s-lab:initial
    name: c2
    volumeMounts:
    - name: empty-vol
      mountPath: /mount2
  volumes:
  - name: empty-vol
    emptyDir: {}

## apply

kevin@k8s-master:~/LABs/emptydir$ kubectl apply -f empty-1.yaml

## Verify

kevin@k8s-master:~/LABs/emptydir$ kubectl get po -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

vol-pod1 2/2 Running 0 52s 10.109.131.57 k8s-node2 <none> <none>

## Enter container c1 of the Pod

kevin@k8s-master:~/LABs/emptydir$ kubectl exec -it vol-pod1 -c c1 -- bash

## Check the mount with df -h

[root@vol-pod1 /]# df -h

Filesystem Size Used Avail Use% Mounted on

/dev/sda1 66G 15G 51G 23% /mount1

...

## Create a file in mount1

[root@vol-pod1 /]# cd mount1/

[root@vol-pod1 mount1]# echo 'i love k8s' > k8s-1.txt

[root@vol-pod1 mount1]# cat k8s-1.txt

i love k8s

[root@vol-pod1 mount1]# exit

## Enter container c2 of the Pod

kevin@k8s-master:~/LABs/emptydir$ kubectl exec -it vol-pod1 -c c2 -- bash

## The file created from c1 is also visible in mount2

[root@vol-pod1 /]# cd mount2/

[root@vol-pod1 mount2]# ls

k8s-1.txt

[root@vol-pod1 mount2]# cat k8s-1.txt

i love k8s

## Copy the file out of the Pod

kevin@k8s-master:~/LABs/emptydir$ kubectl cp vol-pod1:mount1/k8s-1.txt -c c1 ./k8s-1.txt

kevin@k8s-master:~/LABs/emptydir$ ls

empty-1.yaml k8s-1.txt

## After deleting and re-applying, k8s-1.txt is gone when you enter again.

kevin@k8s-master:~/LABs/emptydir$ kubectl delete -f empty-1.yaml

kevin@k8s-master:~/LABs/emptydir$ kubectl apply -f empty-1.yaml

kevin@k8s-master:~/LABs/emptydir$ kubectl exec -it vol-pod1 -c c2 -- bash

[root@vol-pod1 /]# cd /mount2/

[root@vol-pod1 mount2]# ls

## describe

kevin@k8s-master:~/LABs/emptydir$ kubectl describe po vol-pod1

...

Containers:

c1:

Mounts:

/mount1 from empty-vol (rw)

c2:

Mounts:

/mount2 from empty-vol (rw)

...

Volumes:

empty-vol:

Type: EmptyDir (a temporary directory that shares a pod's lifetime)

...

hostPath

## Create the YAML file

kevin@k8s-master:~/LABs/emptydir$ vi hostpath.yaml

apiVersion: v1
kind: Pod
metadata:
  name: pod-vol2
spec:
  nodeSelector:
    kubernetes.io/hostname: k8s-node1
  containers:
  - name: container
    image: dbgurum/k8s-lab:initial
    volumeMounts:
    - name: host-path
      mountPath: /mount1
  volumes:
  - name: host-path
    hostPath:
      path: /data_dir
      type: DirectoryOrCreate

## apply

kevin@k8s-master:~/LABs/emptydir$ kubectl apply -f hostpath.yaml

## Verify

kevin@k8s-master:~/LABs/emptydir$ kubectl get po -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

pod-vol2 1/1 Running 0 16s 10.111.156.126 k8s-node1 <none> <none>

## Enter the Pod

kevin@k8s-master:~/LABs/emptydir$ kubectl exec -it pod-vol2 -c container -- bash

## Check the mount

[root@pod-vol2 /]# mount | grep /mount1

/dev/sda1 on /mount1 type xfs (rw,relatime,attr2,inode64,logbufs=8,logbsize=32k,noquota)

## Create a file in mount1

[root@pod-vol2 /]# cd /mount1/

[root@pod-vol2 mount1]# echo "i love you" >> k8s-2.txt

[root@pod-vol2 mount1]# cat k8s-2.txt

i love you

[root@pod-vol2 mount1]# exit

## Check on the node where the Pod runs

kevin@k8s-node1:~$ cd /data_dir/

kevin@k8s-node1:/data_dir$ ls

k8s-2.txt

Caution

If you use Directory instead of DirectoryOrCreate, the directory must already exist on the node where the Pod will run.
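For comparison, here is a sketch of the volumes section of pod-vol2 with type: Directory. In this case /data_dir must already exist on k8s-node1. Otherwise the volume mount fails and the Pod stays in ContainerCreating.

```yaml
  volumes:
  - name: host-path
    hostPath:
      path: /data_dir
      # Directory: the path must already exist on the node (no auto-creation)
      type: Directory
```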

- Preserving and protecting important data with hostPath + NFS -> data persistence
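Below is a minimal sketch of the NFS side. It assumes an NFS server is already exporting a directory. The server address 192.168.56.100, the export path /nfs_data, and the Pod name nfs-pod are hypothetical. Every node also needs an NFS client installed (e.g. nfs-common on Ubuntu).

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: nfs-pod                # hypothetical name
spec:
  containers:
  - name: nginx-web
    image: nginx:1.21-alpine
    volumeMounts:
    - name: nfs-vol
      mountPath: /var/log/nginx
  volumes:
  - name: nfs-vol
    nfs:
      server: 192.168.56.100   # hypothetical NFS server
      path: /nfs_data          # hypothetical exported directory
```

Unlike hostPath, the data lives on the NFS server. It therefore survives even if the Pod is rescheduled to a different node.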

## Write the nginx YAML file

kevin@k8s-master:~/LABs/emptydir$ vi nginxvol.yaml

apiVersion: v1
kind: Pod
metadata:
  name: weblog-pod
spec:
  nodeSelector:
    kubernetes.io/hostname: k8s-node2
  containers:
  - name: nginx-web
    image: nginx:1.21-alpine
    ports:
    - containerPort: 80
    volumeMounts:
    - name: host-path
      mountPath: /var/log/nginx
  volumes:
  - name: host-path
    hostPath:
      path: /data_dir/web-log
      type: DirectoryOrCreate

## apply

kevin@k8s-master:~/LABs/emptydir$ kubectl apply -f nginxvol.yaml

## Verify

kevin@k8s-master:~/LABs/emptydir$ kubectl get po -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

weblog-pod 1/1 Running 0 18s 10.109.131.59 k8s-node2 <none> <none>

## Send a request

kevin@k8s-master:~/LABs/emptydir$ curl 10.109.131.59:80

## The log can be checked on node2

kevin@k8s-node2:~$ cd /data_dir/web-log/

kevin@k8s-node2:/data_dir/web-log$ ls

access.log error.log

kevin@k8s-node2:/data_dir/web-log$ vi access.log

10.108.82.192 - - [05/Oct/2022:07:55:59 +0000] "GET / HTTP/1.1" 200 615 "-" "curl/7.68.0" "-"

...

## Write the mysql YAML file

kevin@k8s-master:~/LABs/emptydir$ vi mysqlvol.yaml

apiVersion: v1
kind: Pod
metadata:
  name: mysql-pod
spec:
  nodeSelector:
    kubernetes.io/hostname: k8s-node2
  containers:
  - name: mysql-container
    image: mysql:8.0
    volumeMounts:
    - name: host-path
      mountPath: /var/lib/mysql
    env:
    - name: MYSQL_ROOT_PASSWORD
      value: "password"
  volumes:
  - name: host-path
    hostPath:
      path: /data_dir/mysql-data
      type: DirectoryOrCreate

## apply

kevin@k8s-master:~/LABs/emptydir$ kubectl apply -f mysqlvol.yaml

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

mysql-pod 1/1 Running 0 29s 10.109.131.60 k8s-node2 <none> <none>

## Enter the Pod's container

kevin@k8s-master:~/LABs/emptydir$ kubectl exec -it mysql-pod -c mysql-container -- mysql -u root -p

## Add data

mysql> create database test;

mysql> show databases;

+--------------------+

| Database |

+--------------------+

| information_schema |

| mysql |

| performance_schema |

| sys |

| test |

+--------------------+

mysql> use test;

mysql> create table products(prod_id int, prod_name varchar(20));

mysql> insert into products values(1, 'phone');

mysql> select * from products;

+---------+-----------+

| prod_id | prod_name |

+---------+-----------+

| 1 | phone |

+---------+-----------+

## Check the mounted data on node2

kevin@k8s-node2:/data_dir$ cd /data_dir/mysql-data/

kevin@k8s-node2:/data_dir/mysql-data$ ls

auto.cnf binlog.index client-cert.pem '#ib_16384_1.dblwr' ibtmp1 mysql performance_schema server-cert.pem test

binlog.000001 ca-key.pem client-key.pem ib_buffer_pool '#innodb_redo' mysql.ibd private_key.pem server-key.pem undo_001

binlog.000002 ca.pem '#ib_16384_0.dblwr' ibdata1 '#innodb_temp' mysql.sock public_key.pem sys undo_002

## Delete the Pod and apply it again

kevin@k8s-master:~/LABs/emptydir$ kubectl delete -f mysqlvol.yaml

kevin@k8s-master:~/LABs/emptydir$ kubectl apply -f mysqlvol.yaml

## Enter the Pod's container

kevin@k8s-master:~/LABs/emptydir$ kubectl exec -it mysql-pod -c mysql-container -- mysql -u root -p

## The data persists even after the Pod is deleted and recreated.

mysql> show databases;

+--------------------+

| Database |

+--------------------+

| information_schema |

| mysql |

| performance_schema |

| sys |

| test |

+--------------------+

mysql> use test;

mysql> select * from products;

+---------+-----------+

| prod_id | prod_name |

+---------+-----------+

| 1 | phone |

+---------+-----------+
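The root password in mysqlvol.yaml is stored in plain text in the manifest. Below is a sketch of keeping it in a Secret instead. The Secret name mysql-secret and the key root-password are hypothetical. stringData is only a convenience: once stored, the value is still just base64-encoded, not encrypted.

```yaml
apiVersion: v1
kind: Secret
metadata:
  name: mysql-secret           # hypothetical name
type: Opaque
stringData:
  root-password: "password"    # same value as the lab; change it in practice
---
apiVersion: v1
kind: Pod
metadata:
  name: mysql-pod
spec:
  nodeSelector:
    kubernetes.io/hostname: k8s-node2
  containers:
  - name: mysql-container
    image: mysql:8.0
    env:
    # Read the password from the Secret instead of a literal value
    - name: MYSQL_ROOT_PASSWORD
      valueFrom:
        secretKeyRef:
          name: mysql-secret
          key: root-password
    volumeMounts:
    - name: host-path
      mountPath: /var/lib/mysql
  volumes:
  - name: host-path
    hostPath:
      path: /data_dir/mysql-data
      type: DirectoryOrCreate
```

The mysql image applies MYSQL_ROOT_PASSWORD only when it initializes an empty data directory. Because /data_dir/mysql-data already holds data, the existing root password stays in effect.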