Decision Tree(Feat. Wine)

DecisionTreeGridSearchCVMinMaxScalerRobustScalerStandardScalerStratified KFoldkfoldpipelinewine와인의사결정나무파이프라인하이퍼파라미터

0

Part 09. Machine Learning

목록 보기

4/13

해당 글은 제로베이스데이터스쿨 학습자료를 참고하여 작성되었습니다

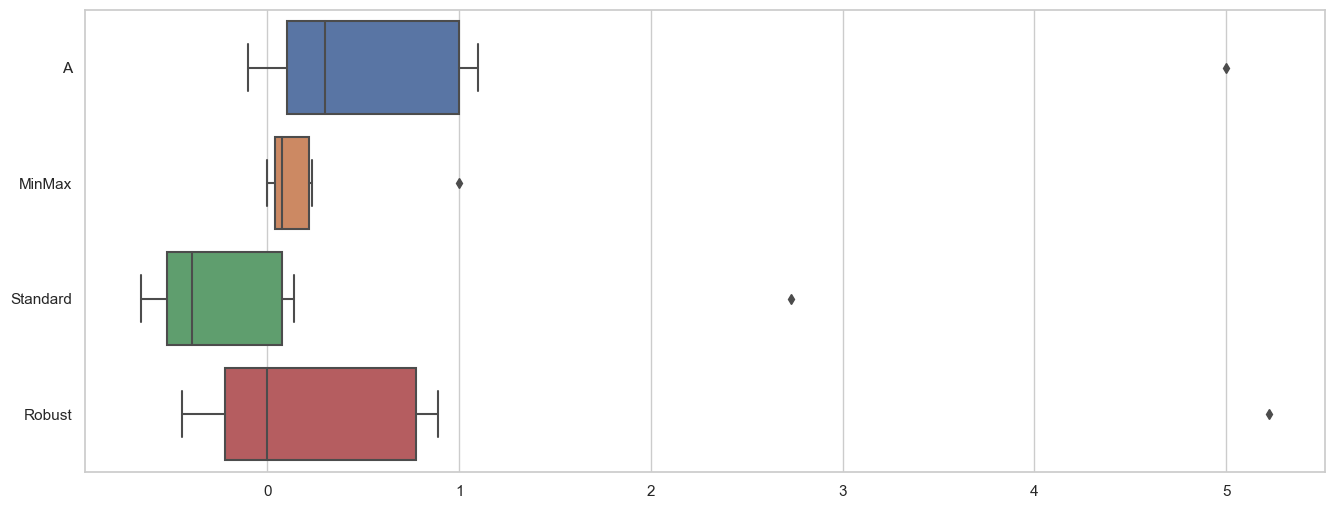

Scaling

MinMaxScaler

- 0 ~ 1로 스케일 조정

- 이상치의 영향이 큼

StandardScaler

- 기본스케일. 평균과 표준편차로 스케일링

- 이상치의 영향이 큼

RobustScaler

- 중간값과 사분위수 사용

- 이상치 영향 최소화

# 3개의 모델을 선언

from sklearn.preprocessing import MinMaxScaler, StandardScaler, RobustScaler

mm = MinMaxScaler()

ss = StandardScaler()

rs = RobustScaler()

# 하나의 데이터프레임에 저장

df_scaler = df.copy()

df_scaler['MinMax'] = mm.fit_transform(df)

df_scaler['Standard'] = ss.fit_transform(df)

df_scaler['Robust'] = rs.fit_transform(df)

df_scaler

--------------------------------------

A MinMax Standard Robust

0 -0.1 0.000000 -0.656688 -0.444444

1 0.0 0.019608 -0.590281 -0.333333

2 0.1 0.039216 -0.523875 -0.222222

3 0.2 0.058824 -0.457468 -0.111111

4 0.3 0.078431 -0.391061 0.000000

5 0.4 0.098039 -0.324655 0.111111

6 1.0 0.215686 0.073785 0.777778

7 1.1 0.235294 0.140192 0.888889

8 5.0 1.000000 2.730051 5.222222# 데이터 시각화

import seaborn as sns

import matplotlib.pyplot as plt

sns.set_theme(style="whitegrid")

plt.figure(figsize=(16,6))

sns.boxplot(data=df_scaler, orient="h")

plt.show()

와인

개요

- 와인은 당도, 타닌, 산도, 알콜, 향기, 풍미, 바디감, 맛 등 굉장히 많은 분류가 있다

- 이 많은 분류를 모두 하는 것은 어렵고 레드와인과 화이트와인으로 분류해보자

목표

- 레드와인, 화이트와인 분류하기

절차

- 데이터 이해

- Decision Tree 활용

- 이진분류(레드&화이트)

- PipeLine

- 하이퍼파라미터

- K-Fold 교차검증

- Stratified K-Fold 교차검증

- GridSearchCV

와인 데이터 가져오기

# 와인 데이터 가져오기

import pandas as pd

red_url = 'https://raw.githubusercontent.com/Pinkwink/ML_tutorial/master/dataset/winequality-red.csv'

white_url = 'https://raw.githubusercontent.com/Pinkwink/ML_tutorial/master/dataset/winequality-white.csv'

red_wine = pd.read_csv(red_url, sep=';')

white_wine = pd.read_csv(white_url, sep=';')

red_wine.head()

white_wine.head()

# 와인 데이터 통합

red_wine['color']=1.

white_wine['color']=0.

wine = pd.concat([red_wine, white_wine])

wine.info()

----------------------------------------------------

<class 'pandas.core.frame.DataFrame'>

Int64Index: 6497 entries, 0 to 4897

Data columns (total 13 columns):

# Column Non-Null Count Dtype

--- ------ -------------- -----

0 fixed acidity 6497 non-null float64

1 volatile acidity 6497 non-null float64

2 citric acid 6497 non-null float64

3 residual sugar 6497 non-null float64

4 chlorides 6497 non-null float64

5 free sulfur dioxide 6497 non-null float64

6 total sulfur dioxide 6497 non-null float64

7 density 6497 non-null float64

8 pH 6497 non-null float64

9 sulphates 6497 non-null float64

10 alcohol 6497 non-null float64

11 quality 6497 non-null int64

12 color 6497 non-null float64

dtypes: float64(12), int64(1)

memory usage: 710.6 KB1) 데이터 이해

칼럼 확인

• fixed acidity : 고정 산도

• volatile acidity : 휘발성 산도

• citric acid : 시트르산

• residual sugar : 잔류 당분

• chlorides : 염화물

• free sulfur dioxide : 자유 이산화황

• total sulfur dioxide : 총 이산화황

• density : 밀도

• pH

• sulphates : 황산염

• alcohol

• quality : 0 ~ 10 (높을 수록 좋은 품질)

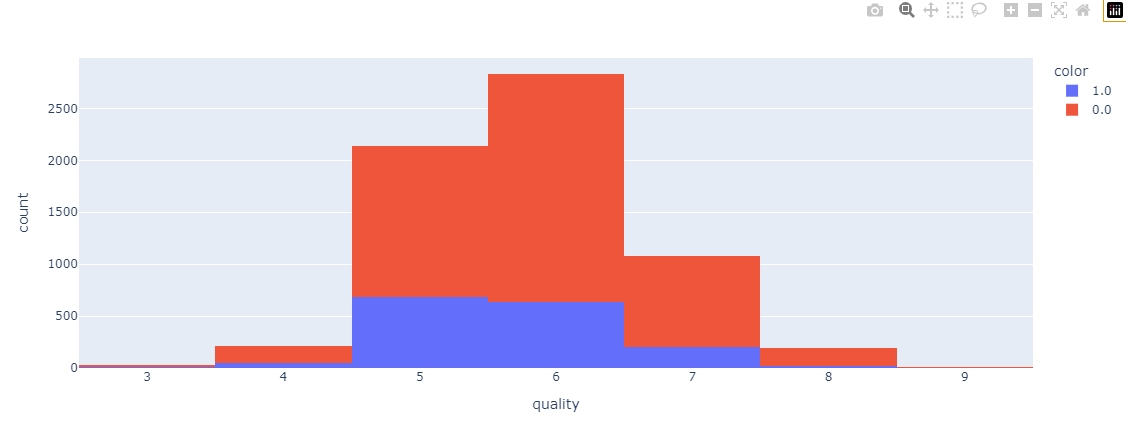

와인 등급 시각화

import plotly.express as px

fig = px.histogram(data_frame=wine, x='quality', color='color')

fig.show()

2) DecisionTree 활용

데이터 분리

특성데이터와 라벨데이터 분리

X = wine.drop(['color'], axis=1)

y = wine['color']학습용 평가용 데이터 분리

# 학습용과 평가용을 8:2로 분리

from sklearn.model_selection import train_test_split

import numpy as np

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=13)

# 훈련용 정답 데이터 확인

np.unique(y_train, return_counts=True)

----------------------------------------------------

# 학습용 라벨데이터 : 화이트와인 3913개, 레드와인 1284개

(array([0., 1.]), array([3913, 1284], dtype=int64))데이터 분류 확인

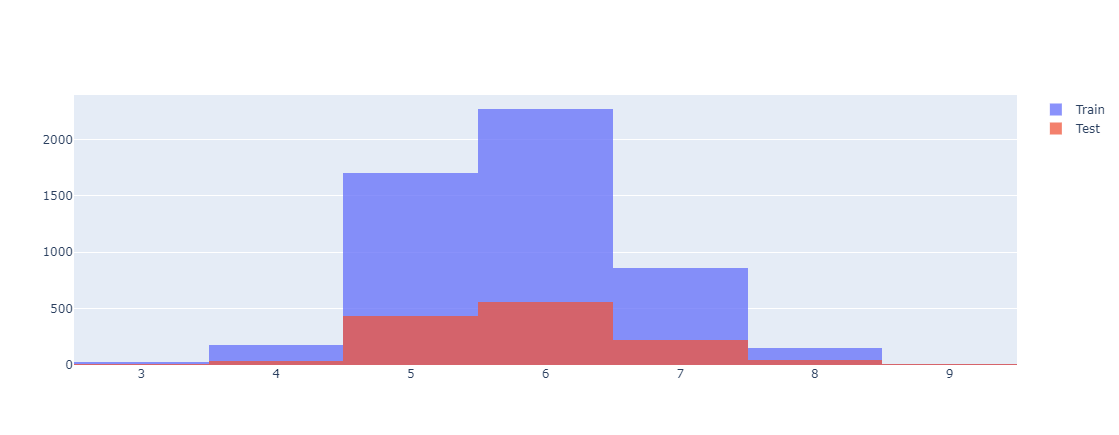

import plotly.graph_objects as go

fig = go.Figure()

fig.add_trace(go.Histogram(x=X_train['quality'], name='Train'))

fig.add_trace(go.Histogram(x=X_test['quality'], name='Test'))

fig.update_layout(barmode='overlay')

fig.update_traces(opacity=0.75)

fig.show()

모델학습

기본 모델 학습

from sklearn.tree import DecisionTreeClassifier

wine_tree = DecisionTreeClassifier(max_depth=2, random_state=13)

wine_tree.fit(X_train, y_train)from sklearn.metrics import accuracy_score

y_pred_tr = wine_tree.predict(X_train)

y_pred_te = wine_tree.predict(X_test)

print('Train ACC : ', accuracy_score(y_train, y_pred_tr))

print('Test Acc : ', accuracy_score(y_test, y_pred_te))

-----------------------------------------------------------------

Train ACC : 0.9553588608812776

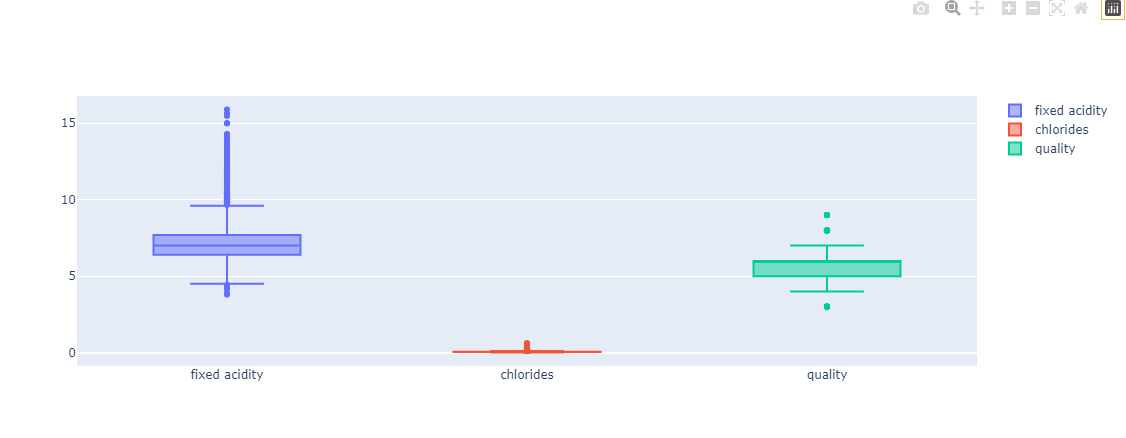

Test Acc : 0.9569230769230769특정항목 시각화

fig = go.Figure()

fig.add_trace(go.Box(y=X['fixed acidity'], name='fixed acidity'))

fig.add_trace(go.Box(y=X['chlorides'], name='chlorides'))

fig.add_trace(go.Box(y=X['quality'], name='quality'))

fig.show()

전처리

스케일러 적용

# 참고사항

# 결정나무에서는 이런 전처리는 의미를 가지지 않는다(현재는 학습용)

from sklearn.preprocessing import MinMaxScaler, StandardScaler

MMS = MinMaxScaler()

SS = StandardScaler()

MMS.fit(X)

SS.fit(X)

X_mms = MMS.transform(X)

X_ss = SS.transform(X)

X_mms_pd = pd.DataFrame(X_mms, columns = X.columns)

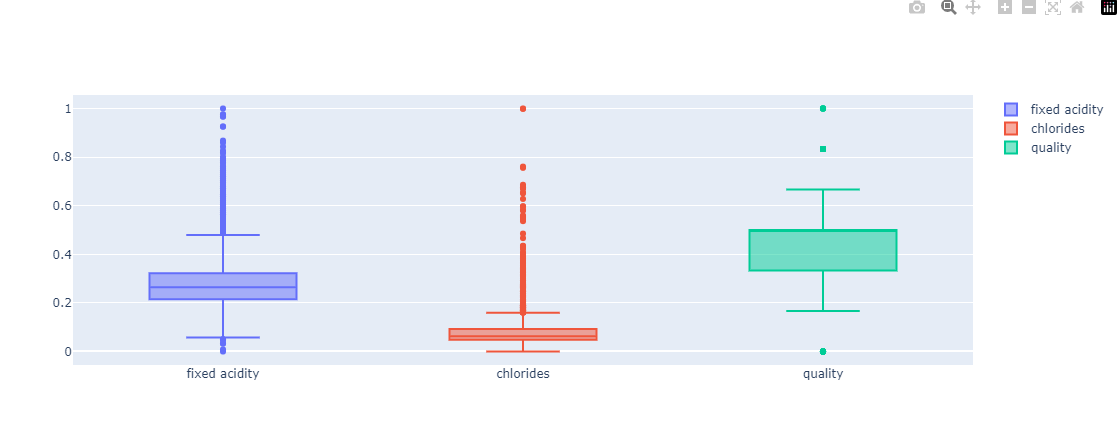

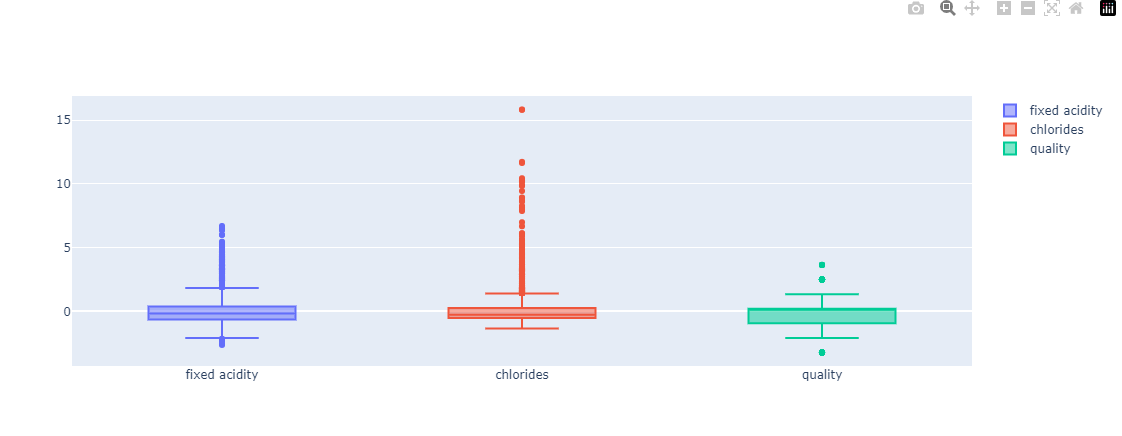

X_ss_pd = pd.DataFrame(X_ss, columns = X.columns)# 시각화 함수

def px_box(target_df):

fig = go.Figure()

fig.add_trace(go.Box(y=target_df['fixed acidity'], name='fixed acidity'))

fig.add_trace(go.Box(y=target_df['chlorides'], name='chlorides'))

fig.add_trace(go.Box(y=target_df['quality'], name='quality'))

fig.show()px_box(X_mms_pd)

px_box(X_ss_pd)

각 전처리 방법별 모델 생성 및 평가 함수

def evaluate(target_df):

X_train, X_test, y_train, y_test = train_test_split(target_df, y, test_size=0.2, random_state=13)

wine_tree = DecisionTreeClassifier(max_depth=2, random_state=13)

wine_tree.fit(X_train, y_train)

y_pred_tr = wine_tree.predict(X_train)

y_pred_test = wine_tree.predict(X_test)

print('Train Acc : ', accuracy_score(y_train, y_pred_tr))

print('Test Acc : ', accuracy_score(y_test, y_pred_test))스케일러 평가

# MinMaxScaler 평가

evaluate(X_mms_pd)

-------------------------------

Train Acc : 0.9553588608812776

Test Acc : 0.9569230769230769# StandardScaler 평가

evaluate(X_ss_pd)

--------------------------------

Train Acc : 0.9553588608812776

Test Acc : 0.9569230769230769중요특성 확인

dict(zip(X_train.columns, wine_tree.feature_importances_))

----------------------------------------------------------

{'fixed acidity': 0.0,

'volatile acidity': 0.0,

'citric acid': 0.0,

'residual sugar': 0.0,

'chlorides': 0.24230360549660776,

'free sulfur dioxide': 0.0,

'total sulfur dioxide': 0.7576963945033922,

'density': 0.0,

'pH': 0.0,

'sulphates': 0.0,

'alcohol': 0.0,

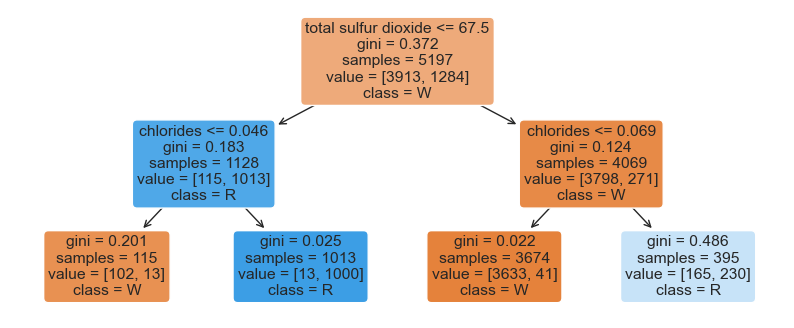

'quality': 0.0}DecisionTree 시각화

from sklearn.tree import plot_tree

import matplotlib.pyplot as plt

plt.figure(figsize=(10,4))

plot_tree(wine_tree, filled=True, feature_names=X_train.columns, class_names=['W', 'R'], rounded=True)

plt.show()

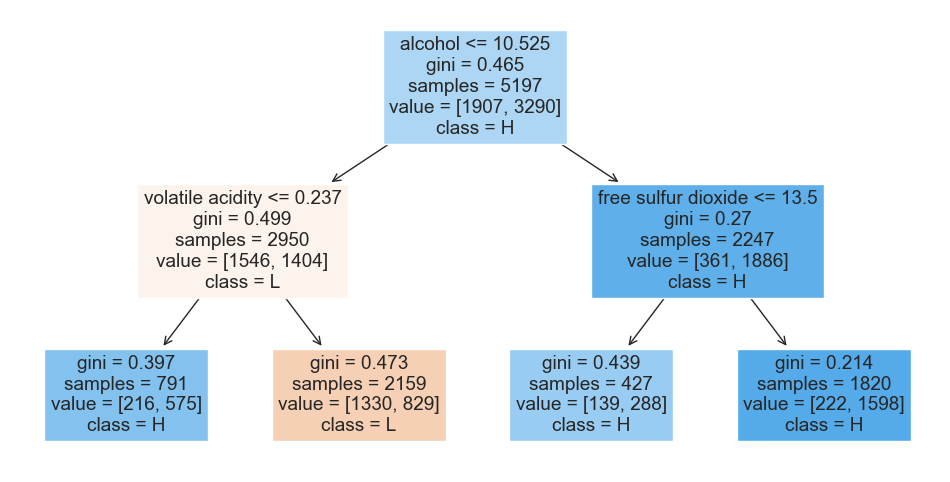

3) 이진분류

quality 칼럼 이진화

wine['taste'] = [1. if grade>5 else 0. for grade in wine['quality']]

wine.info()

----------------------------------------------------

<class 'pandas.core.frame.DataFrame'>

Int64Index: 6497 entries, 0 to 4897

Data columns (total 14 columns):

# Column Non-Null Count Dtype

--- ------ -------------- -----

0 fixed acidity 6497 non-null float64

1 volatile acidity 6497 non-null float64

2 citric acid 6497 non-null float64

3 residual sugar 6497 non-null float64

4 chlorides 6497 non-null float64

5 free sulfur dioxide 6497 non-null float64

6 total sulfur dioxide 6497 non-null float64

7 density 6497 non-null float64

8 pH 6497 non-null float64

9 sulphates 6497 non-null float64

10 alcohol 6497 non-null float64

11 quality 6497 non-null int64

12 color 6497 non-null float64

13 taste 6497 non-null float64

dtypes: float64(13), int64(1)

memory usage: 761.4 KBDecisionTree 학습 및 시각화

- 'taste'의 원본인 'quality'도 같이 제거

X = wine.drop(['quality', 'taste'], axis=1)

y = wine['taste']

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=13)

wine_tree = DecisionTreeClassifier(max_depth=2, random_state=13)

wine_tree.fit(X_train, y_train)

y_pred_tr = wine_tree.predict(X_train)

y_pred_test = wine_tree.predict(X_test)

print('Train Acc : ', accuracy_score(y_train, y_pred_tr))

print('Test Acc : ', accuracy_score(y_test, y_pred_test))

plt.figure(figsize=(12,6))

plot_tree(wine_tree, filled=True, feature_names=X_train.columns, class_names=['L','H'])

plt.show()

---------------------------------

Train Acc : 0.7294593034442948

Test Acc : 0.7161538461538461

4) PipeLine

- 매번 같은 작업을 반복 수행해야 할 때, 오류가 발생할 수 있다.

- 파이프라인으로 처음 선언해두면 계속해서 사용가능하다.

PipeLine 구성

# 와인 데이터 가져오기

import pandas as pd

red_url = 'https://raw.githubusercontent.com/Pinkwink/ML_tutorial/master/dataset/winequality-red.csv'

white_url = 'https://raw.githubusercontent.com/Pinkwink/ML_tutorial/master/dataset/winequality-white.csv'

red_wine = pd.read_csv(red_url, sep=';')

white_wine = pd.read_csv(white_url, sep=';')

red_wine['color']=1.

white_wine['color']=0.

wine = pd.concat([red_wine, white_wine])

X = wine.drop(['color'], axis=1)

y = wine['color']# 파이프라인 구성

from sklearn.pipeline import Pipeline

from sklearn.tree import DecisionTreeClassifier

from sklearn.preprocessing import StandardScaler

estimators = [

('scaler', StandardScaler()),

('clf', DecisionTreeClassifier())

]

pipe = Pipeline(estimators)

pipe.steps

-----------------------------------------------------------------

[('scaler', StandardScaler()), ('clf', DecisionTreeClassifier())]내부 모델 속성 설정

pipe.set_params(clf__max_depth=2)

pipe.set_params(clf__random_state=13)

데이터분류

from sklearn.model_selection import train_test_split

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=13, stratify=y)

pipe.fit(X_train, y_train)학습결과 확인

from sklearn.metrics import accuracy_score

y_pred_tr = pipe.predict(X_train)

y_pred_test = pipe.predict(X_test)

print('Train Acc : ', accuracy_score(y_train, y_pred_tr))

print('Test Acc : ', accuracy_score(y_test, y_pred_test))

---------------------------------------------------------

Train Acc : 0.9657494708485664

Test Acc : 0.95769230769230775) 하이퍼파라미터

- 학습 과정을 제어하는 사용되는 파라미터

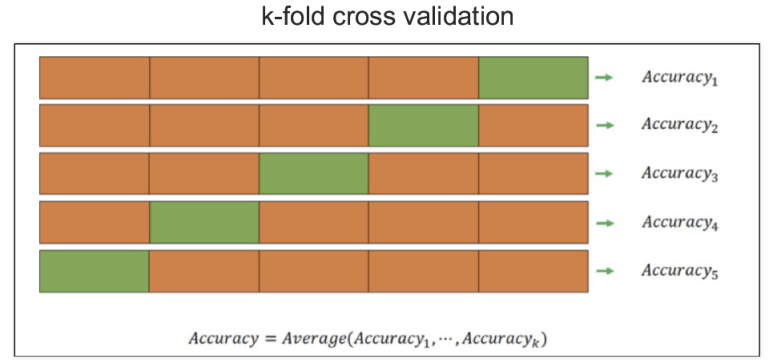

5-1) K-Fold 교차검증

- 과적합을 피하기 위한 교차검증

- 검증데이터를 바꿔가면서 분할 횟수만큼 검증

- 데이터가 편향되어 있는 경우, 학습데이터에 타겟이 포함되지 않을 수 있음

- 학습용데이터를 K개로 분할하여 학습하는 것

KFold 예시

# 예시데이터 활용

import numpy as np

from sklearn.model_selection import KFold

X = np.array([

[1,2], [3,4], [5,6], [7,8]

])

y = np.array([1,2,3,4])

kf = KFold(n_splits=2) # 분할 횟수 결정

print(kf.get_n_splits(X))

print(kf,'\n')

for train_idx, test_idx in kf.split(X):

print('--- idx')

print(train_idx, test_idx)

print('--- train data')

print(X[train_idx])

print('--- val data')

print(X[test_idx])

---------------------------------------------------

2

KFold(n_splits=2, random_state=None, shuffle=False)

--- idx

[2 3] [0 1]

--- train data

[[5 6]

[7 8]]

--- val data

[[1 2]

[3 4]]

--- idx

[0 1] [2 3]

--- train data

[[1 2]

[3 4]]

--- val data

[[5 6]

[7 8]]데이터 가져오기

# 와인데이터 가져오기

import pandas as pd

red_url = 'https://raw.githubusercontent.com/Pinkwink/ML_tutorial/master/dataset/winequality-red.csv'

white_url = 'https://raw.githubusercontent.com/Pinkwink/ML_tutorial/master/dataset/winequality-white.csv'

red_wine = pd.read_csv(red_url, sep=';')

white_wine = pd.read_csv(white_url, sep=';')

red_wine['color'] = 1.

white_wine['color'] = 0.

wine = pd.concat([red_wine, white_wine])

wine['taste'] = [1. if grade>5 else 0. for grade in wine['quality']]

X = wine.drop(['taste', 'quality'], axis=1)

y = wine['taste']KFold 적용

from sklearn.model_selection import KFold

from sklearn.tree import DecisionTreeClassifier

kfold = KFold(n_splits=5) # 분할 갯수 K 결정

wine_tree_cv = DecisionTreeClassifier(max_depth=2, random_state=13)KFold 분할방법

# KFold의 분할방법확인 : kfold는 index를 반환한다.

for train_idx, test_idx in kfold.split(X):

print(len(train_idx), len(test_idx))

------------------------------------------

5197 1300

5197 1300

5198 1299

5198 1299

5198 1299KFold 평가

# 각각의 fold로 학습 후 평가

from sklearn.metrics import accuracy_score

cv_accuracy = []

for train_idx, test_idx in kfold.split(X):

X_train, X_test = X.iloc[train_idx], X.iloc[test_idx]

y_train, y_test = y.iloc[train_idx], y.iloc[test_idx]

wine_tree_cv.fit(X_train, y_train)

pred = wine_tree_cv.predict(X_test)

cv_accuracy.append(accuracy_score(y_test, pred))

cv_accuracy

---------------------

[0.6007692307692307,

0.6884615384615385,

0.7090069284064665,

0.7628945342571208,

0.7867590454195535]# acc의 분산이 작으므로 평균을 사용한다

np.mean(cv_accuracy), np.var(cv_accuracy)

-----------------------------------------

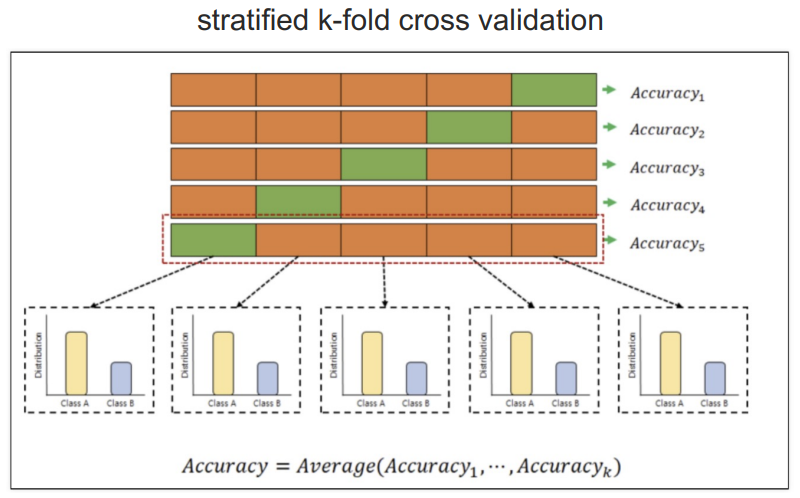

(0.709578255462782, 0.004217029185820937)5-2) Stratified K-Fold 교차검증

- 각 폴드의 비율을 동일하게 분리한다

Stratified KFold 적용 및 평가

# StratifiedKFold

from sklearn.model_selection import StratifiedKFold

from sklearn.model_selection import cross_val_score

skfold = StratifiedKFold(n_splits=5) # 분할 갯수 K 결정

wine_tree_cv = DecisionTreeClassifier(max_depth=2, random_state=13)

cross_val_score(wine_tree_cv, X, y, scoring=None, cv=skfold)

--------------------------------------------------------------------

array([0.55230769, 0.68846154, 0.71439569, 0.73210162, 0.75673595])학습데이터도 포함한 평가

from sklearn.model_selection import cross_validate

cross_validate(wine_tree_cv, X, y, scoring=None, cv=skfold, return_train_score=True)

-------------------------------------------------------------------------------------

{'fit_time': array([0.01097536, 0.00997448, 0.00898337, 0.0089395 , 0.00896764]),

'score_time': array([0.0029881 , 0.00299907, 0.00298595, 0.00203061, 0.00198936]),

'test_score': array([0.55230769, 0.68846154, 0.71439569, 0.73210162, 0.75673595]),

'train_score': array([0.74773908, 0.74696941, 0.74317045, 0.73509042, 0.73258946])}5-3) GridSearchCV

-

하이퍼파라미터의 최적의 값을 도출

-

설정해놓은 값들을 반복해서 넣고 그 결과를 비교하는 방식을 사용하므로 시간이 오래걸린다.

데이터 가져오기

# 와인 데이터 가져오기

import pandas as pd

red_url = 'https://raw.githubusercontent.com/Pinkwink/ML_tutorial/master/dataset/winequality-red.csv'

white_url = 'https://raw.githubusercontent.com/Pinkwink/ML_tutorial/master/dataset/winequality-white.csv'

red_wine = pd.read_csv(red_url, sep=';')

white_wine = pd.read_csv(white_url, sep=';')

red_wine['color'] = 1.

white_wine['color'] = 0.

wine = pd.concat([red_wine, white_wine])

wine['taste'] = [1. if grade>5 else 0. for grade in wine['quality']]

X = wine.drop(['taste', 'quality'], axis=1)

y = wine['taste']GridSearchCV 사용

- 검색대상 : max_depth 계수

from sklearn.model_selection import GridSearchCV

from sklearn.tree import DecisionTreeClassifier

params = {'max_depth' : [2,4,7,10]}

wine_tree = DecisionTreeClassifier(max_depth=2, random_state=13)

gridsearch = GridSearchCV(estimator=wine_tree, param_grid=params, cv=5, n_jobs=7) # cv는 분할갯수

gridsearch.fit(X,y)수행내용 출력

import pprint

pp = pprint.PrettyPrinter(indent=4)

pp.pprint(gridsearch.cv_results_)

---------------------------------------------------------------------------------

{ 'mean_fit_time': array([0.01557207, 0.02707362, 0.03694801, 0.0396534 ]),

'mean_score_time': array([0.00433187, 0.00419917, 0.00400963, 0.00239401]),

'mean_test_score': array([0.6888005 , 0.66356523, 0.65340854, 0.64401587]),

'param_max_depth': masked_array(data=[2, 4, 7, 10],

mask=[False, False, False, False],

fill_value='?',

dtype=object),

'params': [ {'max_depth': 2},

{'max_depth': 4},

{'max_depth': 7},

{'max_depth': 10}],

'rank_test_score': array([1, 2, 3, 4]),

'split0_test_score': array([0.55230769, 0.51230769, 0.50846154, 0.51615385]),

'split1_test_score': array([0.68846154, 0.63153846, 0.60307692, 0.60076923]),

'split2_test_score': array([0.71439569, 0.72363356, 0.68360277, 0.66743649]),

'split3_test_score': array([0.73210162, 0.73210162, 0.73672055, 0.71054657]),

'split4_test_score': array([0.75673595, 0.7182448 , 0.73518091, 0.72517321]),

'std_fit_time': array([0.002706 , 0.00254519, 0.00184818, 0.00089921]),

'std_score_time': array([0.00041986, 0.00115462, 0.00166992, 0.00048907]),

'std_test_score': array([0.07179934, 0.08390453, 0.08727223, 0.07717557])}최적의 모델 검색

print("Best model : ", gridsearch.best_estimator_)

print("Best Score : ", gridsearch.best_score_)

print("Best Params : ", gridsearch.best_params_)

--------------------------------------------------------------------

Best model : DecisionTreeClassifier(max_depth=2, random_state=13)

Best Score : 0.6888004974240539

Best Params : {'max_depth': 2}pipeline 적용한 모델에 GridSearch 사용

from sklearn.pipeline import Pipeline

from sklearn.tree import DecisionTreeClassifier

from sklearn.preprocessing import StandardScaler

estimators = [

('scaler', StandardScaler()),

('clf', DecisionTreeClassifier())

]

pipe = Pipeline(estimators)

param_grid = [{'clf__max_depth':[2,4,7,10]}]

GridSearch = GridSearchCV(estimator=pipe, param_grid=param_grid, cv=5)

GridSearch.fit(X,y)

GridSearch.cv_results_

----------------------------------------------------------------------------

{'mean_fit_time': array([0.01302018, 0.01525412, 0.02469058, 0.03136926]),

'std_fit_time': array([0.00222836, 0.00111415, 0.00097366, 0.00195921]),

'mean_score_time': array([0.00239077, 0.00220132, 0.00239601, 0.00274467]),

'std_score_time': array([0.00049056, 0.00041199, 0.00049911, 0.00037043]),

'param_clf__max_depth': masked_array(data=[2, 4, 7, 10],

mask=[False, False, False, False],

fill_value='?',

dtype=object),

'params': [{'clf__max_depth': 2},

{'clf__max_depth': 4},

{'clf__max_depth': 7},

{'clf__max_depth': 10}],

'split0_test_score': array([0.55230769, 0.51230769, 0.50769231, 0.51692308]),

'split1_test_score': array([0.68846154, 0.63153846, 0.60461538, 0.61153846]),

'split2_test_score': array([0.71439569, 0.72363356, 0.67667436, 0.67205543]),

'split3_test_score': array([0.73210162, 0.73210162, 0.73672055, 0.71439569]),

'split4_test_score': array([0.75673595, 0.7182448 , 0.73518091, 0.72286374]),

'mean_test_score': array([0.6888005 , 0.66356523, 0.6521767 , 0.64755528]),

'std_test_score': array([0.07179934, 0.08390453, 0.08691987, 0.07629056]),

'rank_test_score': array([1, 2, 3, 4])}표로 정리

import pandas as pd

score_df = pd.DataFrame(GridSearch.cv_results_)

score_df[['params', 'rank_test_score', 'mean_test_score', 'std_test_score']]

-----------------------------------------------------------------------------

params rank_test_score mean_test_score std_test_score

0 {'clf__max_depth': 2} 1 0.688800 0.071799

1 {'clf__max_depth': 4} 2 0.663565 0.083905

2 {'clf__max_depth': 7} 3 0.652177 0.086920

3 {'clf__max_depth': 10} 4 0.647555 0.076291