PyTorch

- PyTorch uses tensors to encode a model's inputs, outputs, and parameters.

import torch

import numpy as np

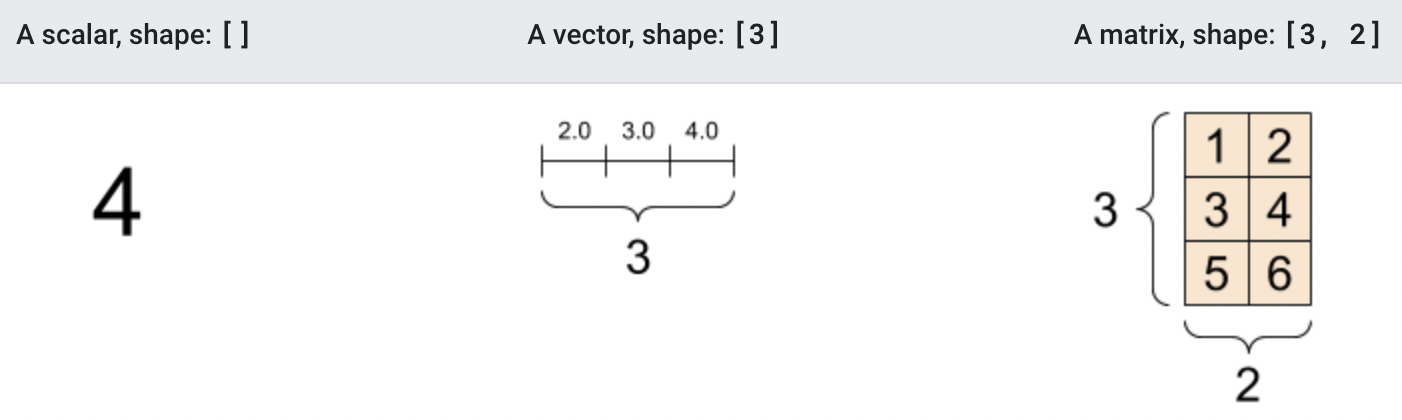

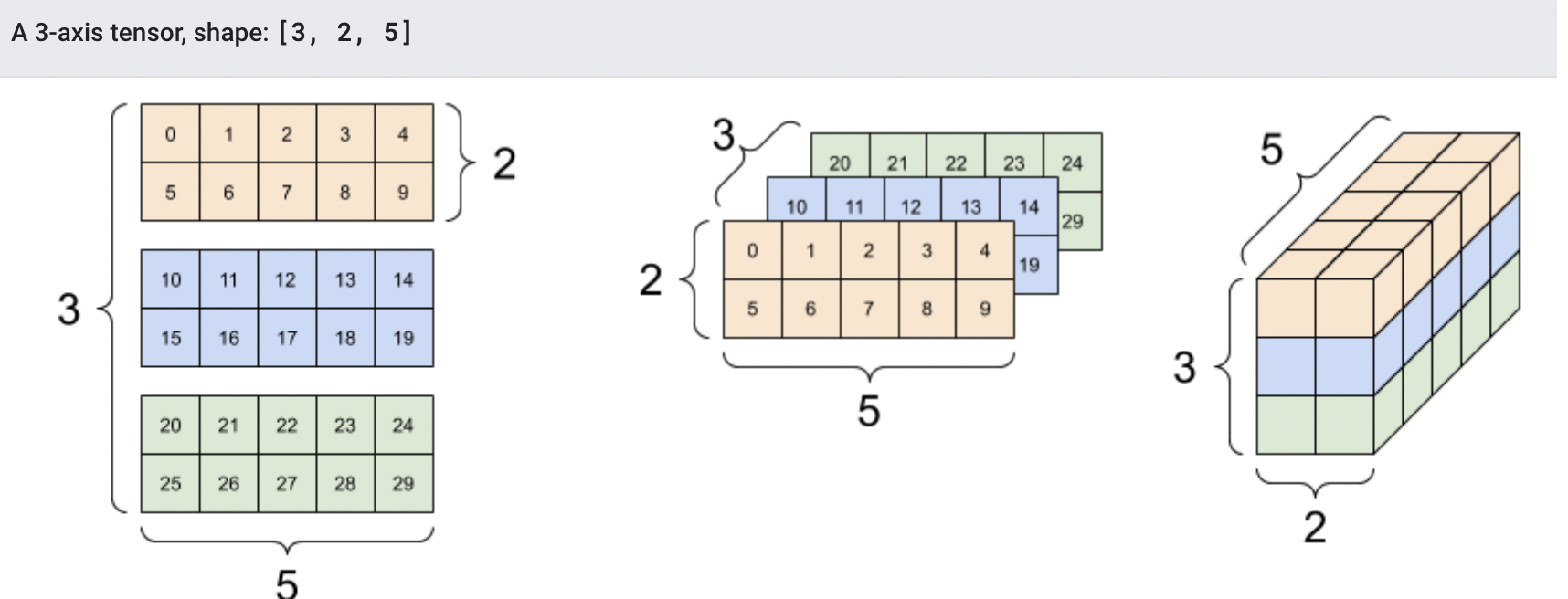

- tensor

: A data structure similar to NumPy's n-dimensional array (the ndarray object), but one that can also run on GPUs and other hardware accelerators.

Initializing a tensor (creating tensors)

- From data

data = [[1,2],[3,4]]

t_data = torch.tensor(data)

t_data

# tensor([[1, 2],

# [3, 4]])

- From a NumPy array

np_array = np.array(data)

t_np = torch.from_numpy(np_array)

- tensor -> numpy array

n = t_data.numpy() # works for CPU tensors; the resulting array shares memory with the tensor
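As a small sketch of how the two conversions behave (assuming CPU tensors; the variable names here are illustrative only): both `torch.from_numpy` and `Tensor.numpy()` share memory with their source instead of copying it, so an in-place change on one side is visible on the other.

```python
import numpy as np
import torch

np_array = np.array([[1, 2], [3, 4]])
t_np = torch.from_numpy(np_array)   # shares memory with np_array

np_array[0, 0] = 100                # in-place change to the NumPy array
print(t_np[0, 0].item())            # the tensor sees the change

back = t_np.numpy()                 # NumPy view of the same memory (CPU only)
t_np[1, 1] = -1                     # in-place change to the tensor
print(back[1, 1])                   # the array sees the change
```

If an independent copy is needed, `torch.tensor(np_array)` copies the data instead of sharing it.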

- From another tensor

- The shape and datatype of the source tensor are retained unless they are explicitly overridden.

t_ones = torch.ones_like(t_data) # retains the properties of t_data

print(f"Ones Tensor: \n {t_ones} \n")

t_rand = torch.rand_like(t_data, dtype=torch.float) # overrides the datatype of t_data

print(f"Random Tensor: \n {t_rand} \n")

# Ones Tensor:

# tensor([[1, 1],

# [1, 1]])

#

# Random Tensor:

# tensor([[0.6358, 0.6764],

# [0.1651, 0.1054]])

- With random or constant values (rand / ones / zeros)

shape = (2,3,)

rand_tensor = torch.rand(shape)

ones_tensor = torch.ones(shape)

zeros_tensor = torch.zeros(shape)

print(f"Random Tensor: \n {rand_tensor} \n")

print(f"Ones Tensor: \n {ones_tensor} \n")

print(f"Zeros Tensor: \n {zeros_tensor}")

# Random Tensor:

# tensor([[0.5408, 0.3814, 0.5961],

# [0.9032, 0.5551, 0.8377]])

# Ones Tensor:

# tensor([[1., 1., 1.],

# [1., 1., 1.]])

# Zeros Tensor:

# tensor([[0., 0., 0.],

# [0., 0., 0.]])

tensor = torch.rand(3,4) # the sizes can also be passed directly to rand() as separate arguments

tensor

# tensor([[0.7525, 0.1220, 0.6535, 0.9239],

# [0.8900, 0.9324, 0.1735, 0.6834],

# [0.3467, 0.8354, 0.8760, 0.2777]])

Tensor operations

Moving a tensor to the GPU

if torch.cuda.is_available(): tensor = tensor.to("cuda") # if CUDA is available, explicitly move the tensor to the GPU
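A device-agnostic variant of the same idea, as a minimal sketch: the `device` variable is an assumption of this example, chosen so the same script runs on machines with or without a GPU.

```python
import torch

# Pick the GPU when CUDA is available, otherwise stay on the CPU.
device = "cuda" if torch.cuda.is_available() else "cpu"

tensor = torch.rand(3, 4)
tensor = tensor.to(device)          # .to() returns a tensor on the target device
print(tensor.device)

# Tensors can also be created directly on the target device.
direct = torch.ones(3, 4, device=device)
print(direct.device.type == tensor.device.type)
```

Note that `.to()` does not modify the tensor in place, so the result has to be assigned back.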

Slicing a 2-D tensor

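- tensor[:3,2] _ rows 0 to 2, column at index 2

(tensor[:3][2] does not do this: it first takes rows 0 to 2, then returns the whole row at index 2)

- tensor[1] _ 2nd row

- tensor[:,1] _ 2nd column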
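The indexing forms above can be checked on a small tensor with known values. This is a sketch using `torch.arange` so the results are deterministic; the variable names are illustrative.

```python
import torch

tensor = torch.arange(12).reshape(3, 4)
# tensor([[ 0,  1,  2,  3],
#         [ 4,  5,  6,  7],
#         [ 8,  9, 10, 11]])

col = tensor[:3, 2]      # rows 0..2, column index 2 -> tensor([ 2,  6, 10])
chained = tensor[:3][2]  # first 3 rows, then row index 2 -> tensor([ 8,  9, 10, 11])
row = tensor[1]          # 2nd row -> tensor([4, 5, 6, 7])
col1 = tensor[:, 1]      # 2nd column -> tensor([1, 5, 9])

print(col, chained, row, col1, sep="\n")
```

The chained form `tensor[:3][2]` applies two separate indexing steps along the first dimension, which is why it returns a row and not a column.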

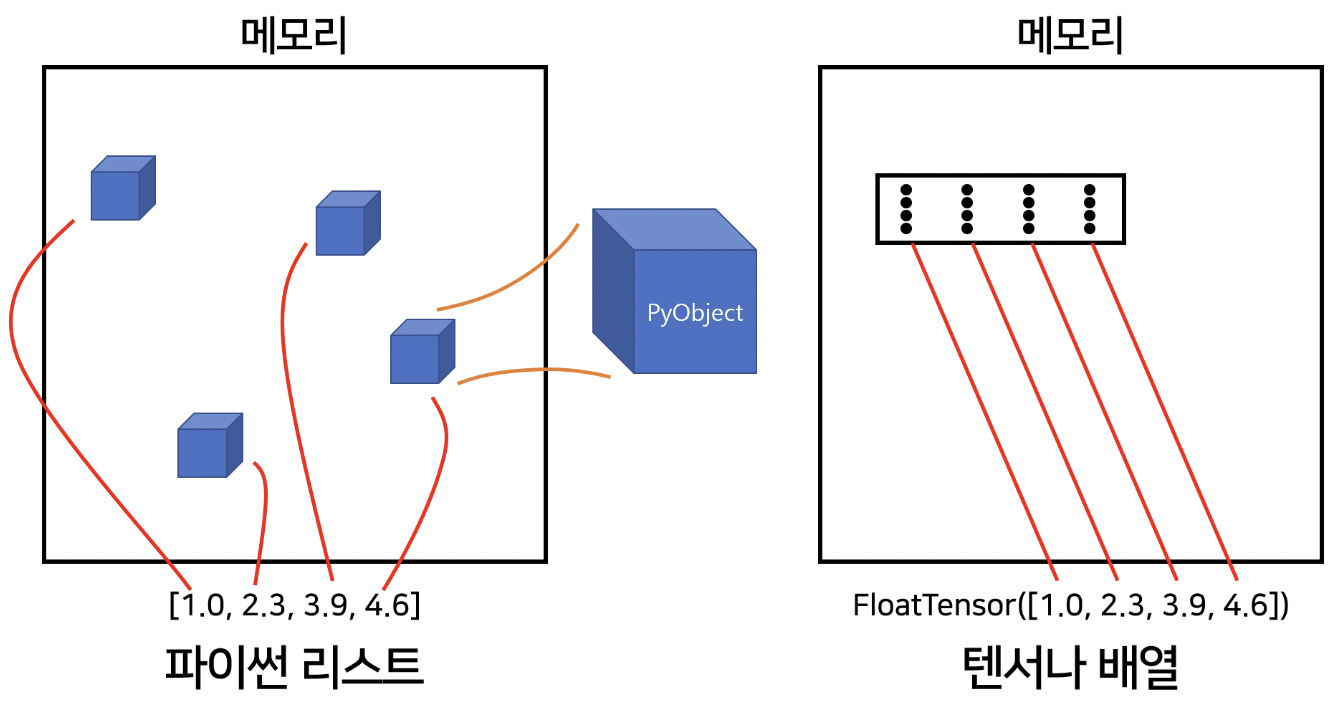

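Python list vs tensor

- A Python list is just a collection of pointers to objects.

- Because every element is a separately allocated Python object, lists are memory-inefficient and slow for numerical work.

- NumPy arrays and tensors store their elements in one contiguous block of memory, using C data types.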

(Source: https://hiddenbeginner.github.io/deeplearning/2020/01/21/pytorch_tensor.html)
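The contrast between the two layouts can be inspected directly. The following is a minimal sketch, not taken from the source above: it uses a 2x3 `int64` tensor (an arbitrary choice for illustration) and prints the properties that describe its contiguous storage.

```python
import torch

py_list = [1, 2, 3, 4, 5, 6]
# Each list element is a full Python object reached through a pointer.
print(type(py_list[0]))                   # <class 'int'>

t = torch.tensor(py_list, dtype=torch.int64).reshape(2, 3)
print(t.is_contiguous())                  # True: elements sit side by side in memory
print(t.element_size())                   # 8 bytes per int64 element
print(t.stride())                         # (3, 1): step 3 elements per row, 1 per column
print(t.element_size() * t.nelement())    # 48 bytes of raw data in total
```

Because every element has the same fixed size, the position of any element follows from the strides alone, which is what lets NumPy and PyTorch run vectorized operations over the raw buffer.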