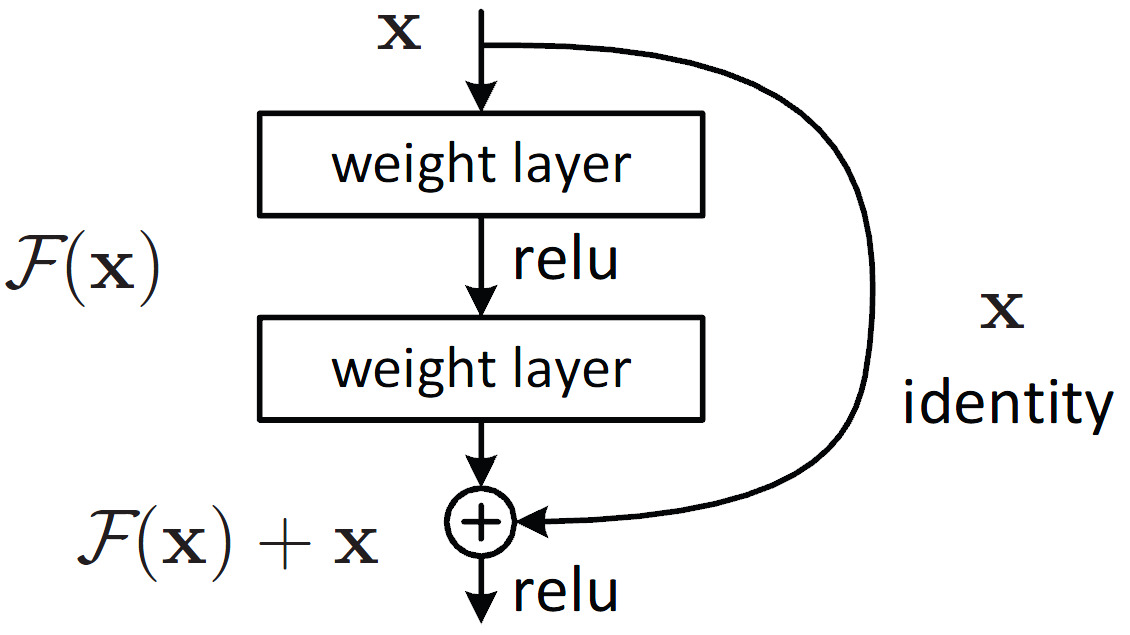

Residual networks (Resnets) have become a prominent architecture in deep learning.

However, a comprehensive understanding of Resnets is still a topic of ongoing research. A recent view argues that Resnets perform iterative refinement of features.

We attempt to further expose properties of this aspect.

To this end, we study Resnets both analytically and empirically.

We formalize the notion of iterative refinement in Resnets by showing that residual connections naturally encourage features of residual blocks to move along the negative gradient of loss as we go from one block to the next.
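A minimal sketch of why this holds, using a first-order Taylor expansion. The notation is an assumption introduced here for illustration, not taken from the text above: $h_i$ is the input feature of block $i$, $F_i$ is its residual function, and $\mathcal{L}$ is the loss viewed as a function of the features.

```latex
\begin{align}
  h_{i+1} &= h_i + F_i(h_i) \\
  \mathcal{L}(h_{i+1})
    &= \mathcal{L}(h_i)
     + \left\langle F_i(h_i),\, \frac{\partial \mathcal{L}(h_i)}{\partial h_i} \right\rangle
     + \mathcal{O}\!\left(\lVert F_i(h_i) \rVert^{2}\right)
\end{align}
```

When $F_i(h_i)$ is small enough for the second-order term to be negligible, the loss can decrease from one block to the next only if the inner product is negative, that is, if $F_i(h_i)$ has a positive component along $-\partial \mathcal{L}/\partial h_i$.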

In addition, our empirical analysis suggests that Resnets are able to perform both representation learning and iterative refinement.

In general, a Resnet block tends to concentrate representation learning behavior in the first few layers while higher layers perform iterative refinement of features.

Finally, we observe that naively sharing residual layers leads to representation explosion and, counterintuitively, overfitting, and we show that simple existing strategies can help alleviate this problem.

Jastrzębski, Stanisław, et al. "Residual connections encourage iterative inference." arXiv preprint arXiv:1710.04773 (2017).

Original paper