CHAPTER 3. 신경망

3.1 퍼셉트론에서 신경망으로

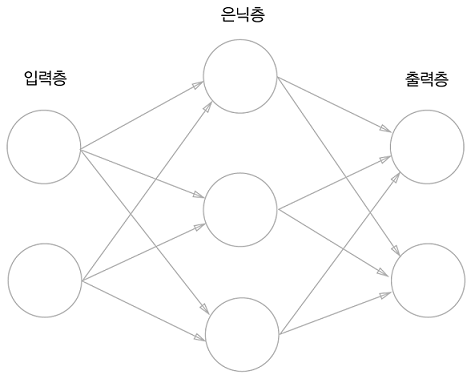

3.1.1 신경망의 예

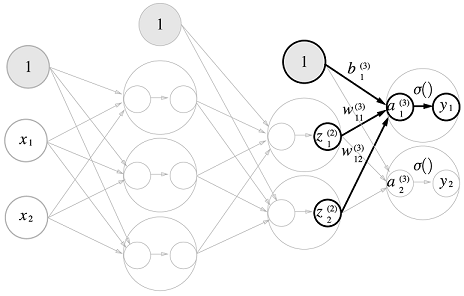

- 가장 왼쪽부터 입력층 - 은닉층 - 출력층

- 해당 이미지에서 0층은 입력층, 1층은 은닉층, 2층은 출력층

- 입력층이나 출력층과 달리, 은닉층의 뉴런은 사람 눈에 보이지 않는다.

3.1.2 퍼셉트론 복습

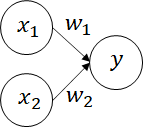

- 과 라는 두 입력 신호를 받아서 를 출력하는 퍼셉트론

- 해당 퍼셉트론을 수식으로 나타내면 다음과 같음

- 는 편향을 나타내는 매개변수로, 뉴런이 얼마나 쉽게 활성화되느냐를 제어

- 과 는 각 신호의 가중치를 나타내는 매개변수로, 각 신호의 영향력을 제어

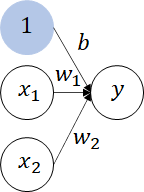

- 위의 이미지는 편향 를 명시하지 않은 퍼셉트론 이미지이고, 편향 를 명시한다면 다음과 같이 나타낼 수 있음

- 편향을 의미하는, 가중치가 이고 입력이 인 뉴련을 추가

- 해당 퍼셉트론의 동작은, 이라는 3개의 신호가 뉴런에 입력되어, 각 신호에 가중치를 곱한 후, 다음 뉴런에 전달

- 다음 뉴런에서는 전달받은 신호의 값을 더하여, 그 합이 을 넘으면 을 출력하고, 그렇지 않으면 을 출력

- 해당 퍼셉트론을 수식으로 표현하면 다음과 같음

3.1.3 활성화 함수의 등장

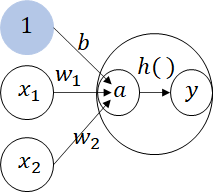

- 입력 신호의 총합을 출력 신호로 변환하는 함수를 일반적으로 활성화 함수(activation function)

- 활성화 함수는 입력 신호의 총합이 활성화를 일으키는지를 정하는 역할

- 위의 식은, 가중치가 달린 입력 신호와 편향의 총합 를 계산하고, 이를 함수 에 넣어 를 출력하는 흐름

- 기존 뉴런의 원을 키우고, 내부에 활성화 함수의 처리 과정을 명시

- 뉴런을 그릴 때 보통은 왼쪽처럼 하나의 원으로 표시하지만, 신경망의 동작을 더 명확히 드러내고자 할 때는 오른쪽처럼 활성화 처리 과정을 명시

3.2 활성화 함수

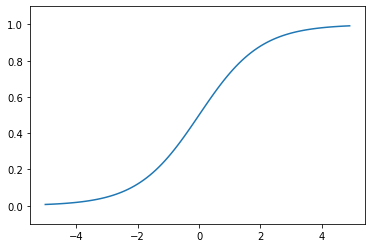

3.2.1 시그모이드 함수(sigmoid function)

- 시그모이드 함수는 신경망에서 자주 이용하는 활성화 함수로, 다음 식과 같이 표현

- 신경망에서 활성화 함수로 시그모이드 함수를 이용하여 신호를 변환하고, 그 변환된 신호를 다음 뉴런에 전달

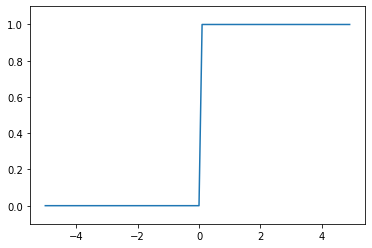

3.2.2 계단 함수 구현하기

- 계단 함수란, 입력이 0을 넘으면 1을 출력하고, 그 외에는 0을 출력하는 함수

def step_function(x):

if x > 0:

return 1

else:

return 0- 다음과 같이 numpy를 이용하여 간단하게 구현 가능

import numpy as np

def step_function(x):

y = x > 0

return y.astype(np.int) # 입력된 bool형을 int형으로 변환3.2.3 계단 함수의 그래프

import numpy as np

import matplotlib.pyplot as plt

def step_function(x):

return np.array(x > 0, dtype=np.int)

x = np.arange(-5.0, 5.0, 0.1)

y = step_function(x)

plt.plot(x, y)

plt.ylim(-0.1, 1.1)

plt.show()

3.2.4 시그모이드 함수 구현하기

import numpy as np

import matplotlib.pyplot as plt

def sigmoid_function(x):

return 1 / (1 + np.exp(-x))

x = np.arange(-5.0, 5.0, 0.1)

y = sigmoid_function(x)

plt.plot(x, y)

plt.ylim(-0.1, 1.1)

plt.show()

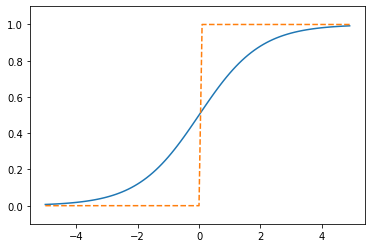

3.2.5 시그모이드 함수와 계단 함수 비교

- 함수의 형태

- 시그모이드 함수는 부드러운 곡선 형태이며, 입력에 따라 출력이 연속적으로 변화

- 계단 함수는 을 경계로 출력이 급격하게 변화

- 시그모이드 함수의 매끈함이 신경망 학습에서 아주 중요한 역할을 함

- 출력 값의 형태

- 시그모이드 함수는 범위 내의 실수를 반환

- 계단 함수는 또는 을 반환

- 즉, 퍼셉트론에서는 혹은 이 흘렀다면, 신경망에서는 연속적인 실수가 흐름

- 두 함수의 공통점

- 입력이 작을 때의 출력은 에 가깝고, 입력이 커지면 출력이 에 가까워지는 구조

- 출력은 항상 에서 사이

3.2.6 비선형 함수

- 계단 함수와 시그모이드 함수의 또 다른 공통점은, 두 함수 모두 비선형 함수

- 신경망에서는 활성화 함수로 비선형 함수를 사용해야함

- 선형 함수의 경우, 층을 아무리 깊게 만들어도 '은닉층이 없는 네트워크'로도 똑같은 기능을 할 수 있음

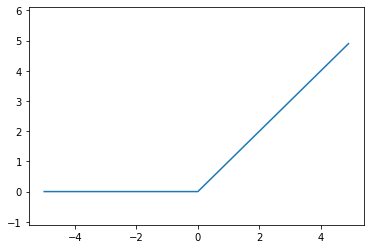

3.2.7 ReLU 함수

- 최근에는 활성화 함수로 ReLU(Rectified Linear Unit) 함수를 주로 이용

- RuLU는 입력이 을 넘으면 그 입력을 그대로 출력하고, 이하면 을 출력하는 함수

def relu(x):

return np.maximum(0, x)

x = np.arange(-5.0, 5.0, 0.1)

y1 = relu(x)

plt.plot(x, y1)

plt.ylim(-1.1, 6.1)

plt.show()

3.3 다차원 배열의 계산

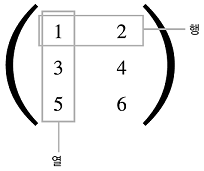

3.3.1 다차원 배열

- 다차원 배열의 기본은 '숫자의 집합'

- 2차원 배열은 특히 행렬(matrix)이라고 부르고, 배열의 가로 방향을 행(row), 세로 방향을 열(column)이라고 함

import numpy as np

# 1차원 배열

a = np.array([1, 2, 3, 4])

print(a) # [1 2 3 4]

print(np.ndim(a)) # 1

print(a.shape) # (4,)

print(a.shape[0]) # 4

b = np.array([[1, 2], [3, 4], [5, 6]])

print(b) # [[1 2] [3 4] [5 6]]

print(np.ndim(b)) # 2

print(b.shape) # (3, 2)

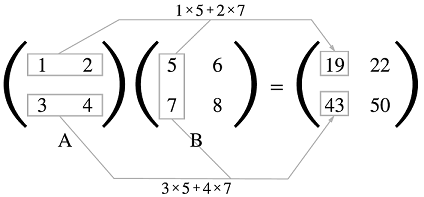

print(b.shape[0]) # 33.3.2 행렬의 내적(행렬 곱)

- 2X2 행렬의 내적은 다음과 같이 구함

import numpy as np

a = np.array([[1, 2], [3, 4]])

b = np.array([[5, 6], [7, 8]])

np.dot(a, b)-

np.dot(): 입력이 1차원 배열이면 벡터를, 2차원 배열이면 행렬 곱을 계산

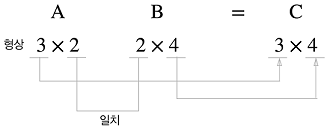

-

다차원 배열을 곱하려면 두 행렬의 대응하는 차원의 요소 수를 일치시켜야 함

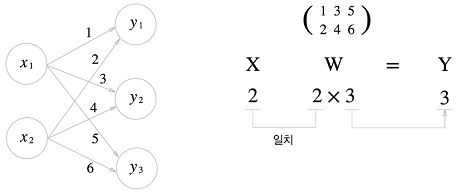

3.3.3 신경망의 내적

- 해당 신경망은 편향과 활성화 함수를 생략하고 가중치만 갖고 있음

import numpy as np

x = np.array([1, 2])

w = np.array([[1, 3, 5], [2, 4, 6]])

y = np.dot(x, w)

print(y) # [5 11 17]3.4 3층 신경망 구현하기

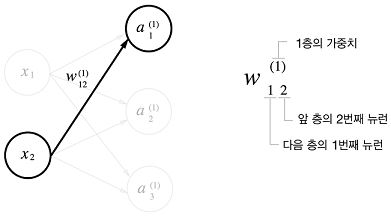

3.4.1 표기법 설명

- 입력층의 뉴런 에서 다음 층의 뉴런 으로 향하는 선 위에 가중치를 표시

- 에서, (1)은 1층의 가중치, 1층의 뉴런임을 뜻하는 번호

- 가중치 오른쪽 아래의 두 숫자는 차례대로 다음 층 뉴런과 앞 층 뉴런의 인덱스 번호

- 즉, 뉴런에서 다음 층의 로 향할 때의 가중치라는 뜻

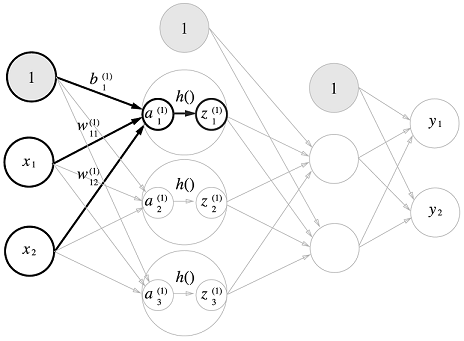

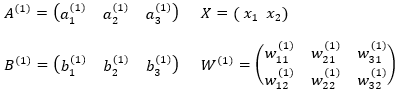

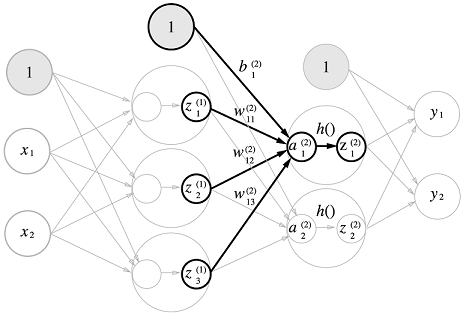

3.4.2 각 층의 신호 전달 구현하기

- 은닉층에서의 가중치의 총합을 로 표기하고 활성화 함수 로 변환된 신호 로 표현

- 1층의 가중치의 총합을 행렬식으로 간소화

import numpy as np

# 입력층에서 1층으로 신호 전달

X = np.array([1.0, 0.5])

W1 = np.array([[0.1, 0.3, 0.5], [0.2, 0.4, 0.6]])

B1 = np.array([0.1, 0.2, 0.3])

print(W1.shape) # (2, 3)

print(X.shape) # (2,)

print(B1.shape) # (3,)

A1 = np.dot(X, W1) + B1

Z1 = sigmoid_function(A1)

print(A1) # [0.3, 0.7, 1.1]

print(Z1) # [0.57444252, 0.66818777, 0.75026011]

# 1층에서 2층으로 신호 전달

W2 = np.array([[0.1, 0.4], [0.2, 0.5], [0.3, 0.6]])

B2 = np.array([0.1, 0.2])

A2 = np.dot(Z1, W2) + B2

Z2 = sigmoid_function(A2)

# 2층에서 출력층으로 신호 전달

# 출력층의 활성화 함수로, identity_function을 이용

def identity_function(x):

return x

W3 = np.array([[0.1, 0.3], [0.2, 0.4]])

B3 = np.array([0.1, 0.2])

A3 = np.dot(Z2, W3) + B3

Y = identity_function(A3) # 혹은 Y = A33.4.3 구현 정리

# 가중치와 편향을 초기화하고 이를 딕셔너리 변수 network에 저장

# 딕셔너리 변수 network에는 각 층에서 필요한 매개변수(가중치와 편향)을 저장

def init_network():

network = {}

network['W1'] = np.array([[0.1, 0.3, 0.5], [0.2, 0.4, 0.6]])

network['b1'] = np.array([0.1, 0.2, 0.3])

network['W2'] = np.array([[0.1, 0.4], [0.2, 0.5], [0.3, 0.6]])

network['b2'] = np.array([0.1, 0.2])

network['W3'] = np.array([[0.1, 0.3], [0.2, 0.4]])

network['b3'] = np.array([0.1, 0.2])

return network

# 입력 신호를 출력으로 변환하는 처리 과정 구현

# 신호가 순방향(입력에서 출력 방향)으로 전달됨(순전파)

def forward(network, x):

W1, W2, W3 = network['W1'], network['W2'], network['W3']

b1, b2, b3 = network['b1'], network['b2'], network['b3']

a1 = np.dot(x, W1) + b1

z1 = sigmoid_function(a1)

a2 = np.dot(z1, W2) + b2

z2 = sigmoid_function(a2)

a3 = np.dot(z2, W3) + b3

y = identity_function(a3)

return y

network = init_network()

x = np.array([1.0, 0.5])

y = forward(network, x)

print(y) # [ 0.31682708 0.69627909]3.5 출력층 설계하기

- 신경망은 분류와 회귀 모두에 이용할 수 있음

- 둘 중 어떤 문제냐에 따라 출력층에서 사용하는 활성화 함수가 달라짐

- 일반적으로 회귀에는 항등 함수를, 분류에는 소프트맥스 함수를 사용

3.5.1 항등 함수와 소프트맥스 함수 구현하기

- 항등 함수(identity function)은 입력을 그대로 출력

- 소프트맥스 함수(softmax function)의 식은 다음과 같음

- 은 출력층의 뉴런 수, 는 그중 번째 출력

- 분자는 입력 신호 의 지수 함수, 분모는 모든 입력 신호의 지수 함수의 합으로 구성

def softmax(a):

exp_a = np.exp(a)

sum_exp_a = np.sum(exp_a)

y = exp_a / sum_exp_a

return y3.5.2 소프트맥수 함수 구현 시 주의점

-

소프트맥스 함수는 지수 함수를 사용하는데, 이로 인해서 오버플로 문제가 발생해 수치가 불안정해질수 있는 문제점이 있음

-

오버플로 문제를 해결하기위해 소프트맥스 함수 개선

a = np.array([1010, 1000, 990])

#print(np.exp(a) / np.sum(np.exp(a)))

c = np.max(a) # c = 1010 (최댓값)

print(a-c) # a-c = array([0, -10, -20])

print(np.exp(a-c) / np.sum(np.exp(a-c)))- 소프트맥수의 지수 함수를 계산할 때, 어떤 정수를 더하거나 빼도 결과는 바뀌지 않음

- 에 어떤 값을 대입해도 상관없지만, 오버플로를 막기 위해 입력 신호 중 최댓값을 이용하는 것이 일반적

def softmax(a):

c = np.max(a)

exp_a = np.exp(a - c)

sum_exp_a = np.sum(exp_a)

y = exp_a / sum_exp_a

return y3.5.3 소프트맥수 함수의 특징

- 소프트맥수 함수의 출력은 0에서 1.0 사이의 실수

- 출력의 총합이 1 -> 확률로 해석이 가능

- 지수 함수가 단조 증가 함수이기 때문에, 소프트맥스 함수를 적용해도 각각의 원소의 대소 관계는 변하지 않음

- 결과적으로 신경망을 분류할 때, 출력층의 소프트맥스 함수를 생략하는 것이 가능

3.5.4 출력층의 뉴런 수 정하기

- 출력층의 뉴런 수는 문제에 맞게 적절히 정해야 함

- 분류에서는 분류하고 싶은 클래스 수로 설정하는 것이 일반적

3.6 손글씨 숫자 인식

3.6.1 MNIST 데이터셋

- 28X28 크기의 회색조 이미지 (1채널)

- 각 픽셀은 0~255까지의 값

- 각 이미지에는 실제 의미하는 숫자가 레이블로 붙어 있음

import sys, os

sys.path.append(os.pardir)

from dataset.mnist import load_mnist

(x_train, t_train), (x_test, t_test) = load_mnist(flatten=True, normalize=False)

print(x_train.shape) # (60000, 784)

print(t_train.shape) # (60000,)

print(x_test.shape) # (10000, 784)

print(t_test.shape) # (10000,)import sys, os

sys.path.append(os.pardir)

import numpy as np

from dataset.mnist import load_mnist

from PIL import image

def img_show(img):

pil_img = Image.fromarray(np.uint8(img))

pil_img.show()

(x_train, t_train), (x_test, t_test) = load_mnist(flatten=True, normalize=False)

img = x_train[0]

label = t_train[0]

print(label)

print(img.shape)

img = img.reshape(28, 28)

print(img.shape)

img_show(img)3.6.2 신경망의 추론 처리

import numpy as np

import pickle

from dataset.mnist import load_mnist

from common.functions import sigmoid, softmax

def get_data():

(x_train, t_train), (x_test, t_test) = load_mnist(normalize=True, flatten=True, one_hot_label=False)

return x_test, t_test

def init_network():

with open("sample_weight.pkl", 'rb') as f:

network = pickle.load(f)

return network

def predict(network, x):

W1, W2, W3 = network['W1'], network['W2'], network['W3']

b1, b2, b3 = network['b1'], network['b2'], network['b3']

a1 = np.dot(x, W1) + b1

z1 = sigmoid(a1)

a2 = np.dot(z1, W2) + b2

z2 = sigmoid(a2)

a3 = np.dot(z2, W3) + b3

y = softmax(a3)

return y

x, t = get_data()

network = init_network()

accuracy_cnt = 0

for i in range(len(x)):

y = predict(network, x[i])

p = np.argmax(y)

if p == t[i]:

accuracy_cnt += 1

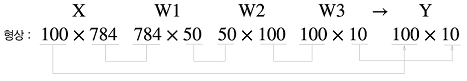

print("Accuracy: "+str(float(accuracy_cnt) / len(x)))3.6.3 배치 처리

- 이미지 여러 장을 한꺼번에 입력하는 경우

- 배치(batch): 하나로 묶은 입력 데이터

x, t = get_data()

network = init_network()

batch_size = 100

accuracy_cnt = 0

for i in range(0, len(x), batch_size):

x_batch = x[i:i+batch_size]

y_batch = predict(network, x_batch)

p = np.argmax(y_batch, axis=1)

accuracy_cnt += np.sum(p == t[i:i+batch_size])

print("Accuracy: " + str(float(accuracy_cnt) / len(x)))- 배치를 사용하면, 처리 시간을 줄일 수 있음

3.7 정리

- 신경망에서는 활성화 함수로 시그모이드 함수와 ReLU 함수 같은 매끄럽게 변화하는 함수를 이용

- 넘파이의 다차원 배열을 잘 사용하면 신경망을 효율적으로 구현할 수 있음

- 기계학습 문제는 크게 회귀와 분류로 나뉨

- 출력층의 활성화 함수로는 회귀에서는 주로 항등 함수를, 분류에서는 주로 소프트맥스 함수를 이용

- 분류에서는 출력층의 뉴런 수를 분류하려는 클래스 수와 같게 설정

- 입력 데이터를 묶은 것을 배치라 하며, 추론 처리를 이 배치 단위로 진행하면 결과를 훨씬 빠르게 얻을 수 있음