Hadoop installation

- Install Hadoop by following the guide at the link below.

https://velog.io/@dldydrhkd/Hadoop-%EC%99%84%EC%A0%84-%EB%B6%84%EC%82%B0-%EB%AA%A8%EB%93%9C-%EC%84%A4%EC%B9%98

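Java installation

- Install Java.

yum install java-11-openjdk-devel.x86_64

- Note that Spark 2.4.x officially supports only Java 8 (Java 11 support arrived in Spark 3.0), and Hadoop 2.7 also targets Java 8. For this version combination, java-1.8.0-openjdk-devel.x86_64 is the safer package. Whichever JDK you install, JAVA_HOME in spark-env.sh must point to it.

Spark installation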
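Before installing Spark, it is worth confirming which JDK major version is actually on the machine (Spark 2.4.x expects 8). The sketch below assumes bash. java_major_version and installed_java_version are hypothetical helper names. The parsing assumes the usual openjdk version "..." line that java -version prints to stderr.

```shell
#!/usr/bin/env bash
# Hypothetical helpers (not part of Spark or the JDK): report the JDK major version.

# Converts a Java version string to its major version:
#   "1.8.0_382" -> 8   (pre-Java-9 scheme with a leading "1.")
#   "11.0.20"   -> 11  (Java 9+ scheme)
java_major_version() {
  local ver="$1"
  case "$ver" in
    1.*) ver="${ver#1.}" ;;   # drop the legacy "1." prefix
  esac
  echo "${ver%%[._]*}"        # keep everything before the first "." or "_"
}

# Prints the version string of the java found on PATH.
# java -version writes to stderr, e.g.: openjdk version "11.0.20" 2023-07-18
installed_java_version() {
  java -version 2>&1 | awk -F'"' '/version/ {print $2; exit}'
}

# Example:
#   major="$(java_major_version "$(installed_java_version)")"
#   [ "$major" = 8 ] || echo "warning: found Java $major, Spark 2.4.3 expects Java 8"
```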

- Create a spark directory on the master server.

cd /opt
mkdir spark
cd spark

- Download Spark from the link below and extract the archive.

https://archive.apache.org/dist/spark/spark-2.4.3/

wget https://archive.apache.org/dist/spark/spark-2.4.3/spark-2.4.3-bin-hadoop2.7.tgz
tar -xzvf spark-2.4.3-bin-hadoop2.7.tgz
mv spark-2.4.3-bin-hadoop2.7 spark-2.4.3
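If the download step has to be repeated, for example after a failed attempt, the extract-and-rename part can be made safe to re-run. This is a minimal sketch that assumes bash. It also assumes the archive's top-level directory matches its file name, as the mv command above implies. install_spark_tarball is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Hypothetical helper: extract a Spark binary tarball into DEST and rename
# spark-<ver>-bin-hadoop<hv> to spark-<ver>. Does nothing if the target exists.
install_spark_tarball() {
  local tgz="$1" dest="$2"
  local base short
  base="$(basename "$tgz" .tgz)"   # e.g. spark-2.4.3-bin-hadoop2.7
  short="${base%-bin-*}"           # e.g. spark-2.4.3
  if [ -d "$dest/$short" ]; then
    echo "$dest/$short already exists, skipping"
    return 0
  fi
  tar -xzf "$tgz" -C "$dest" || return 1
  mv "$dest/$base" "$dest/$short" || return 1
  echo "$dest/$short"
}

# Example (on master):
#   cd /opt/spark
#   wget https://archive.apache.org/dist/spark/spark-2.4.3/spark-2.4.3-bin-hadoop2.7.tgz
#   install_spark_tarball spark-2.4.3-bin-hadoop2.7.tgz /opt/spark
```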

Path configuration

- Open ~/.bashrc to configure the Spark paths.

vim ~/.bashrc

- Add the following lines.

export SPARK_HOME=/opt/spark/spark-2.4.3
export PATH=$PATH:$SPARK_HOME/bin:$SPARK_HOME/sbin

- Apply the changes.

source ~/.bashrc
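Appending to ~/.bashrc by hand is easy to do twice when the setup is repeated. The same lines are also needed on each worker so that start-slave.sh can be found by name. The following is a minimal sketch of an idempotent version, assuming bash. add_spark_env and its marker comment are hypothetical, not a Spark convention.

```shell
#!/usr/bin/env bash
# Hypothetical helper: add the Spark environment lines to an rc file only once.
# A marker comment records that the block has already been added.
add_spark_env() {
  local rc="$1" spark_home="$2"
  local marker="# >>> spark env >>>"
  touch "$rc"
  if grep -qF "$marker" "$rc"; then
    return 0                         # already configured, do nothing
  fi
  {
    echo "$marker"
    echo "export SPARK_HOME=$spark_home"
    # Single quotes keep $PATH and $SPARK_HOME unexpanded in the rc file.
    echo 'export PATH=$PATH:$SPARK_HOME/bin:$SPARK_HOME/sbin'
  } >> "$rc"
}

# Example:
#   add_spark_env ~/.bashrc /opt/spark/spark-2.4.3 && source ~/.bashrc
```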

Spark environment configuration

- Open (or create) spark-env.sh.

cd $SPARK_HOME/conf
vim spark-env.sh

- Fill it in as follows.

export SPARK_HOME=/opt/spark/spark-2.4.3
export HADOOP_HOME=/opt/hadoop/hadoop-2.7.0
# Spark reads HADOOP_CONF_DIR (not HADOOP_CONFIG_DIR)
export HADOOP_CONF_DIR=$HADOOP_HOME/etc/hadoop
# Hadoop jars for Spark's classpath; the /opt/mapr slf4j jar is a MapR-specific path and is simply ignored if the file does not exist
MAPR_HADOOP_CLASSPATH=$($HADOOP_HOME/bin/hadoop classpath):/opt/mapr/lib/slf4j-log4j12-1.7.5.jar
#MAPR_HADOOP_JNI_PATH=`hadoop jnipath`
# Native Hadoop libraries
MAPR_HADOOP_JNI_PATH=$HADOOP_HOME/lib/native
export SPARK_LIBRARY_PATH=$MAPR_HADOOP_JNI_PATH
MAPR_SPARK_CLASSPATH="$MAPR_HADOOP_CLASSPATH"
SPARK_DIST_CLASSPATH=$MAPR_SPARK_CLASSPATH
export SPARK_DIST_CLASSPATH
# Security status (MapR-specific; MAPR_SECURITY_STATUS is normally unset on plain Apache Hadoop, so this block does nothing)
# source /opt/mapr/conf/env.sh
if [ "$MAPR_SECURITY_STATUS" = "true" ]; then
  SPARK_SUBMIT_OPTS="$SPARK_SUBMIT_OPTS -Dmapr_sec_enabled=true"
fi
export SPARK_DAEMON_MEMORY=4g
export SPARK_WORKER_MEMORY=32g
# Spark 2.x deprecates SPARK_MASTER_IP in favor of SPARK_MASTER_HOST
export SPARK_MASTER_HOST=master
# Must match the JDK actually installed under /usr/lib/jvm
export JAVA_HOME=/usr/lib/jvm/java-11-openjdk-11.0.20.0.8-1.el7_9.x86_64
export PATH=$PATH:$JAVA_HOME/bin
export CLASSPATH="."
SPARK_WORKER_OPTS="-Dspark.worker.cleanup.enabled=true"
# ZooKeeper-based master recovery; requires ZooKeeper running on the listed hosts
SPARK_DAEMON_JAVA_OPTS="-Dspark.deploy.recoveryMode=ZOOKEEPER -Dspark.deploy.zookeeper.url=master:2181,worker1:2181,worker2:2181,worker3:2181 -Dspark.deploy.zookeeper.dir=/spark"

Deployment
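Before the configuration is copied to every worker, a quick check of spark-env.sh on master can catch mistakes that would otherwise be replicated to each host. This is a minimal sketch that assumes bash. check_spark_env is a hypothetical helper, and its checks are only a sample:

- HADOOP_CONF_DIR rather than HADOOP_CONFIG_DIR
- SPARK_MASTER_HOST rather than the deprecated SPARK_MASTER_IP
- a core-site.xml under HADOOP_CONF_DIR

The helper sources the file in a subshell, so it also runs the hadoop classpath call inside it.

```shell
#!/usr/bin/env bash
# Hypothetical helper: sanity-check a spark-env.sh before distributing it.
# Prints one line per problem and returns non-zero if any were found.
check_spark_env() {
  local env_file="$1" status=0 hconf
  # 1. Shell syntax (bash -n parses the file without executing it).
  if ! bash -n "$env_file"; then
    echo "syntax error in $env_file"
    return 1
  fi
  # 2. Variable names Spark does not read (assignments only; comment lines are skipped).
  if grep -Eq '^[[:space:]]*(export[[:space:]]+)?HADOOP_CONFIG_DIR=' "$env_file"; then
    echo "HADOOP_CONFIG_DIR is set; Spark reads HADOOP_CONF_DIR"
    status=1
  fi
  if grep -Eq '^[[:space:]]*(export[[:space:]]+)?SPARK_MASTER_IP=' "$env_file"; then
    echo "SPARK_MASTER_IP is deprecated; use SPARK_MASTER_HOST"
    status=1
  fi
  # 3. Source the file in a subshell (its output discarded) and inspect HADOOP_CONF_DIR.
  hconf="$(unset HADOOP_CONF_DIR; . "$env_file" >/dev/null 2>&1; printf '%s' "${HADOOP_CONF_DIR:-}")"
  if [ ! -f "$hconf/core-site.xml" ]; then
    echo "no core-site.xml under HADOOP_CONF_DIR='$hconf'"
    status=1
  fi
  return "$status"
}

# Example (on master):
#   check_spark_env "$SPARK_HOME/conf/spark-env.sh" && echo "spark-env.sh looks OK"
```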

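- Copy the Spark installation configured on master to each worker. If /opt/spark is missing on a worker, scp creates /opt/spark itself as the copy, so /opt/spark/spark-2.4.3 (SPARK_HOME) would not exist there. Create the directory first and make sure the hadoop user can write to it.

# Copy to worker1
scp -r /opt/spark/spark-2.4.3 hadoop@worker1:/opt/spark
# Copy to worker2
scp -r /opt/spark/spark-2.4.3 hadoop@worker2:/opt/spark
# Copy to worker3
scp -r /opt/spark/spark-2.4.3 hadoop@worker3:/opt/spark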

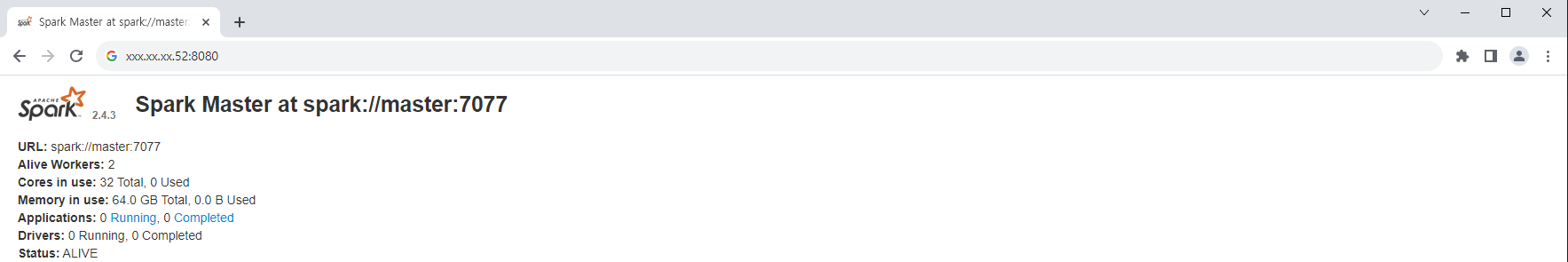
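With more workers, the per-worker scp lines can be collapsed into a loop that also creates the target directory first. This is a minimal sketch with several assumptions:

- bash is available on master.
- Passwordless SSH works from master as the hadoop user.
- That user may create /opt/spark on the workers; otherwise create it once with sudo.

deploy_spark, WORKERS and DRY_RUN are hypothetical names. Setting DRY_RUN=1 prints the commands instead of running them.

```shell
#!/usr/bin/env bash
# Hypothetical deployment loop: copy the Spark directory from master to each worker.
WORKERS=(worker1 worker2 worker3)

deploy_spark() {
  local src="${1:-/opt/spark/spark-2.4.3}" user="${2:-hadoop}" run=""
  local w parent
  parent="$(dirname "$src")"          # e.g. /opt/spark
  # DRY_RUN=1 prefixes every command with echo so nothing is executed.
  if [ "${DRY_RUN:-0}" = 1 ]; then
    run="echo"
  fi
  for w in "${WORKERS[@]}"; do
    # Create the parent directory first so scp copies INTO it.
    $run ssh "$user@$w" "mkdir -p $parent"
    $run scp -r "$src" "$user@$w:$parent"
  done
}

# Example (on master):
#   DRY_RUN=1 deploy_spark     # preview the commands
#   deploy_spark               # actually copy
```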

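Running Spark

- Run the following command on the master server.

start-master.sh

- Open the master web UI at xx.xxx.xxx.xx:8080 and copy the master URL shown at the top of the page (it starts with spark://).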

- On each worker server, run the following command, passing the copied URL as the argument.

start-slave.sh spark://master:7077

- If you see the following, the setup succeeded.
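As an alternative to logging into each worker, Spark 2.4's standalone scripts can start every worker from master. List the worker hostnames one per line in $SPARK_HOME/conf/slaves (renamed conf/workers in Spark 3.1), then run start-slaves.sh. It connects over SSH and points each worker at spark://SPARK_MASTER_HOST:7077; SPARK_MASTER_HOST falls back to the master's own hostname if unset. This assumes passwordless SSH and the same SPARK_HOME path on every host. write_slaves_file is a hypothetical helper.

```shell
#!/usr/bin/env bash
# Hypothetical helper: write Spark 2.x's conf/slaves file, one hostname per line.
write_slaves_file() {
  local conf_dir="$1"
  shift
  printf '%s\n' "$@" > "$conf_dir/slaves"
}

# Example (on master, after start-master.sh):
#   write_slaves_file "$SPARK_HOME/conf" worker1 worker2 worker3
#   start-slaves.sh   # SSHes to each host in conf/slaves and runs start-slave.sh there
```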